I have tried several times over the years to get into machine learning, but each time I was turned away at the door because I could never grasp the physical meaning of the various matrix operations. By chance, a colleague recommended MIT’s classic open course on linear algebra. After a few lectures I was exhilarated: the door that had been shut so tightly seemed to open a crack.

So in each article of this series I will share some interesting points from the course. To keep things accessible, each chapter will be as self-contained and concise as possible, so you can read any of them on its own. The trade-off is that this series sacrifices some precision and is not systematic; it merely aims to spark a little interest. Note: Examples are all generated by KimiChat.

Author: 木鸟杂记 https://www.qtmuniao.com/2024/06/29/interesting-linear-algebra-1/ please indicate the source when reposting

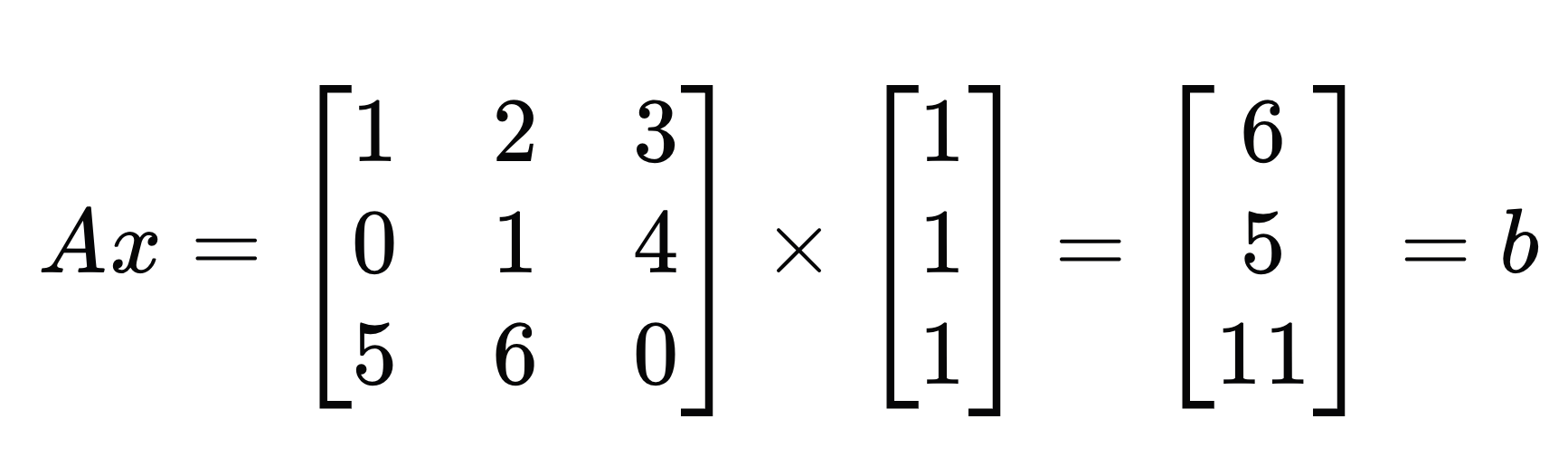

Right-multiplying a Column Vector

la-Ax-b.png

For a square matrix A, right-multiplying it by a column vector x can be understood as: using the values in x as weights to perform a linear combination of the column vectors in A, resulting in a new column vector b.
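To make the column view concrete, here is a minimal sketch in plain Python. The matrix `A`, the vector `x`, and the helpers `column` and `combine` are made up for illustration and do not come from the course. It computes `b` once as a weighted sum of the columns of `A` and once with the usual row-by-column dot products, and the two results should agree.

```python
# Hypothetical 3x3 example; the numbers are chosen only for illustration.
A = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 10],
]
x = [1, 0, 2]  # treated as a column vector


def column(M, j):
    """Return column j of a matrix stored as a list of rows."""
    return [row[j] for row in M]


def combine(weights, vectors):
    """Weighted sum of equal-length vectors: sum of weights[k] * vectors[k]."""
    n = len(vectors[0])
    return [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(n)]


# Column view: b = x[0]*col_0 + x[1]*col_1 + x[2]*col_2
b_columns = combine(x, [column(A, j) for j in range(3)])

# Textbook view: each entry of b is the dot product of a row of A with x
b_dots = [sum(a * xi for a, xi in zip(row, x)) for row in A]

print(b_columns)  # [7, 16, 27]
print(b_dots)     # [7, 16, 27]
```

Here `x = [1, 0, 2]` means "take one copy of the first column, none of the second, and two copies of the third."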

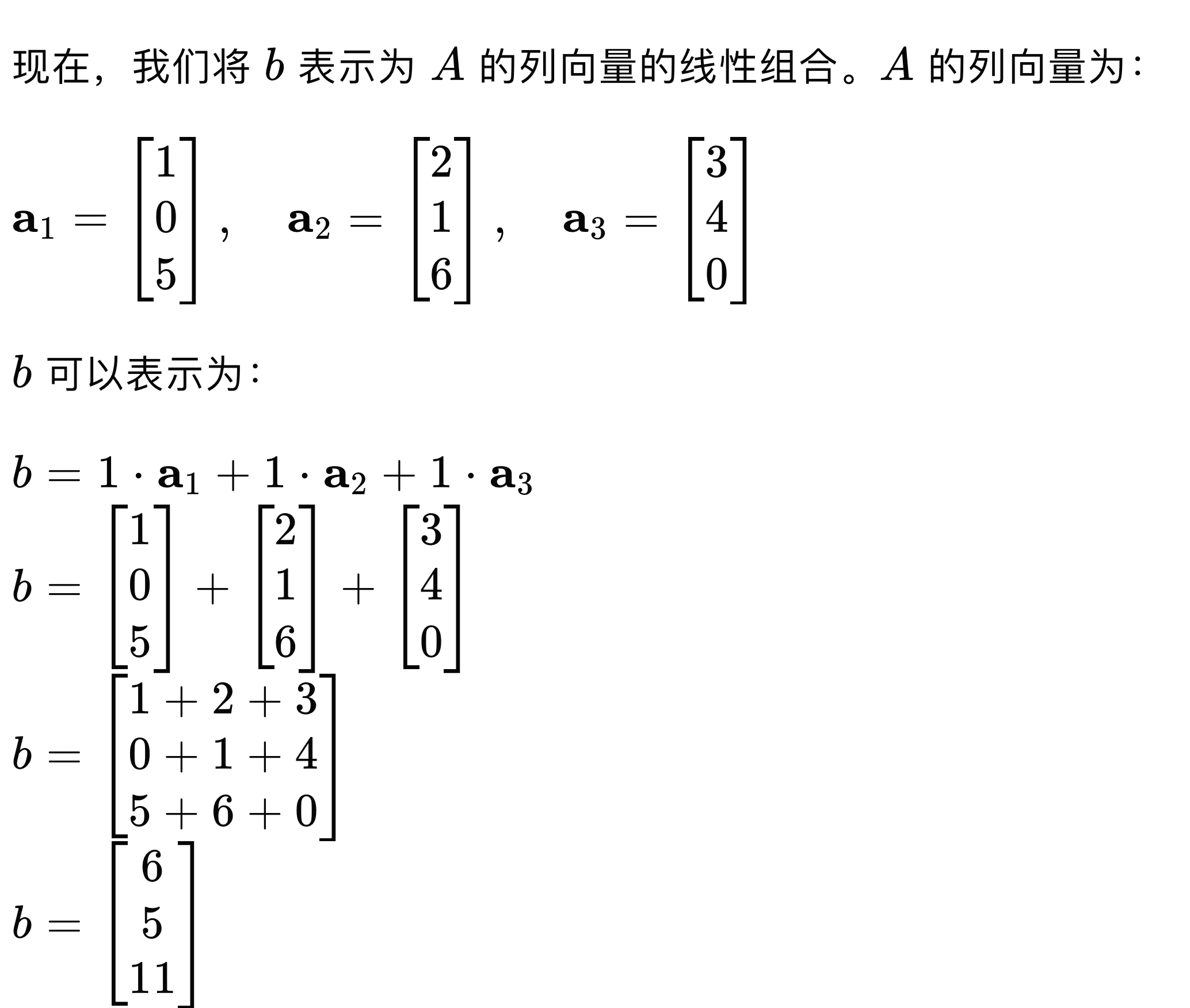

Using this understanding to break down the figure above:

la-b-A.png

Note: This requires certain properties, such as A being an invertible (that is, non-singular) matrix, but we won’t delve into these terms here. Those interested can watch the video.

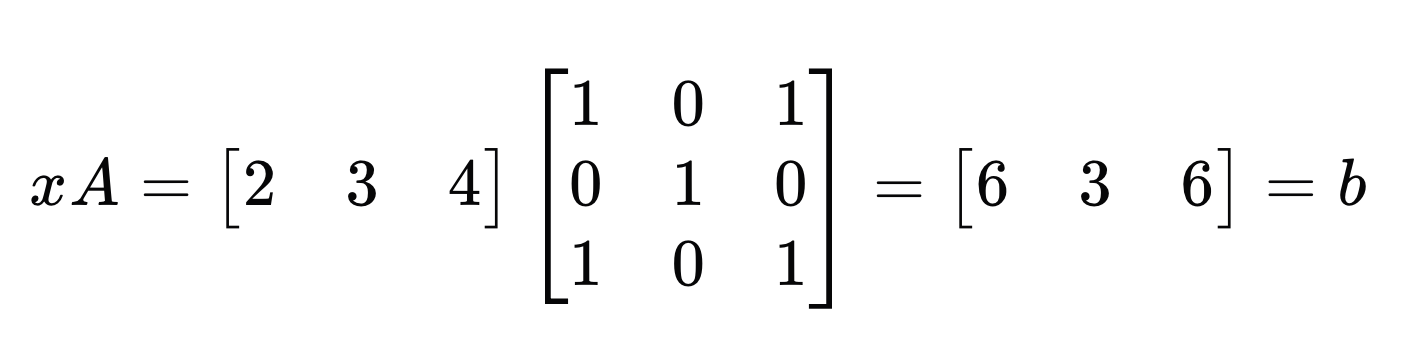

Left-multiplying a Row Vector

la-matrix-vector.png

For a square matrix A, left-multiplying it by a row vector x can be understood as: using the values in x as weights to perform a linear combination of the row vectors in A, resulting in a new row vector b.
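The row view can be checked the same way. In this sketch (again with made-up values and a hypothetical `combine` helper), the row vector `x` weights the rows of `A`, and the result is compared with the entry-by-entry computation of `x A`.

```python
# Hypothetical 3x3 example; the numbers are chosen only for illustration.
A = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 10],
]
x = [1, 0, 2]  # treated as a row vector


def combine(weights, vectors):
    """Weighted sum of equal-length vectors: sum of weights[k] * vectors[k]."""
    n = len(vectors[0])
    return [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(n)]


# Row view: b = x[0]*row_0 + x[1]*row_1 + x[2]*row_2
b_rows = combine(x, A)

# Textbook view: entry j of b is the dot product of x with column j of A
b_dots = [sum(x[i] * A[i][j] for i in range(3)) for j in range(3)]

print(b_rows)  # [15, 18, 23]
print(b_dots)  # [15, 18, 23]
```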

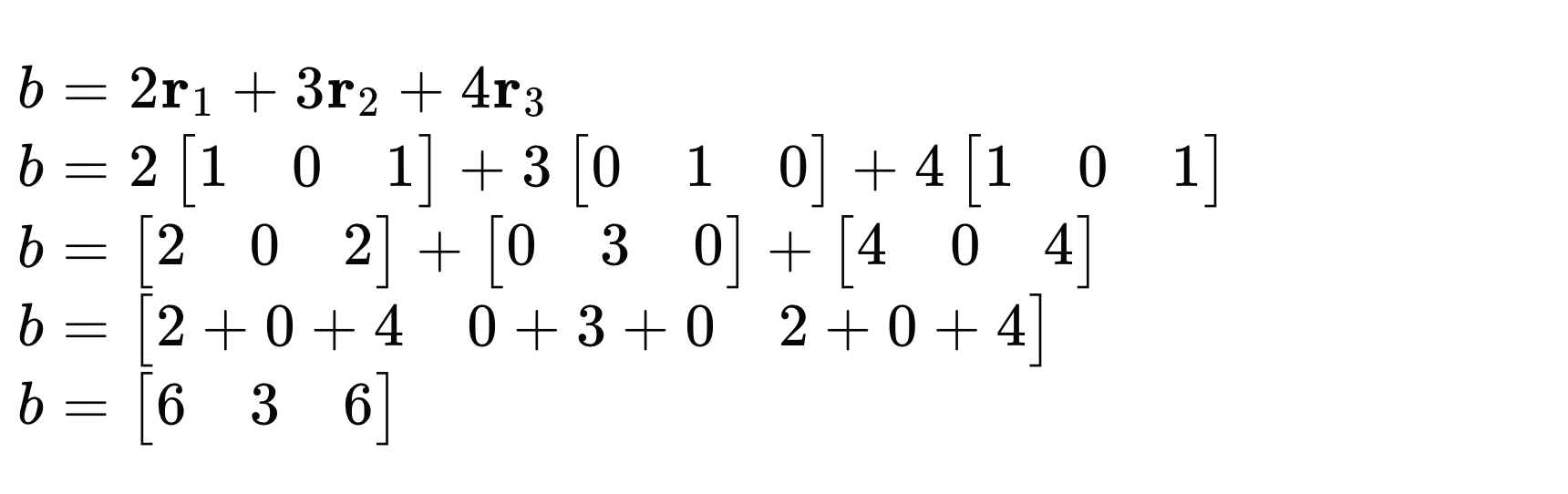

Breaking down the process:

la-b-linear.png

Extension

With the above intuition, we can extend it to break down matrix multiplication.

For example, we can break it down using right-multiplication by column vectors.

A * X = B

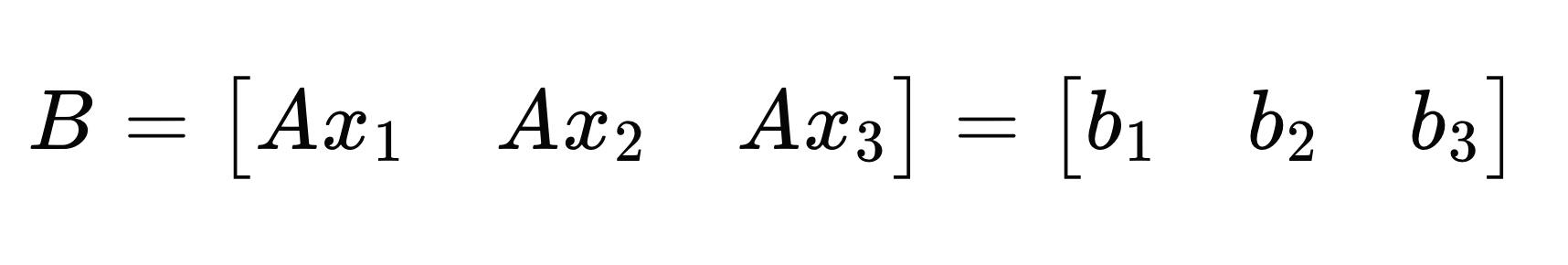

It can be broken down as:

la-B-as-A.png

That is, using the three columns in X to perform linear combinations on A respectively, obtaining the three columns in the result B.
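As a rough sketch of this column-by-column breakdown (the matrices and helper names below are assumptions for illustration, not taken from the figures), each column of `X` is used as a set of weights on the columns of `A`, producing one column of `B`. The result is then compared with the ordinary triple-loop product.

```python
# Hypothetical 3x3 matrices; the numbers are chosen only for illustration.
A = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 10],
]
X = [
    [1, 0, 1],
    [0, 1, 1],
    [2, 0, 0],
]


def column(M, j):
    """Return column j of a matrix stored as a list of rows."""
    return [row[j] for row in M]


def combine(weights, vectors):
    """Weighted sum of equal-length vectors: sum of weights[k] * vectors[k]."""
    n = len(vectors[0])
    return [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(n)]


cols_of_A = [column(A, j) for j in range(3)]

# Column k of B is the combination of A's columns weighted by column k of X
cols_of_B = [combine(column(X, k), cols_of_A) for k in range(3)]

# Turn the list of columns back into a list of rows
B = [list(row) for row in zip(*cols_of_B)]

# Textbook definition: B[i][j] = sum over k of A[i][k] * X[k][j]
B_dots = [[sum(A[i][k] * X[k][j] for k in range(3)) for j in range(3)]
          for i in range(3)]

print(B)  # [[7, 2, 3], [16, 5, 9], [27, 8, 15]]
```

The second column of `X` is `[0, 1, 0]`, so the second column of `B` is simply the second column of `A`, which is an easy spot check.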

Similarly, you can also use left-multiplication by row vectors to break down the above multiplication; you can try it yourself.
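If you want to check your attempt, one possible sketch of the row version follows, using the same kind of made-up matrices and a hypothetical `combine` helper. Here each row of `A` supplies the weights, and the rows of `X` are the vectors being combined.

```python
# Hypothetical 3x3 matrices; the numbers are chosen only for illustration.
A = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 10],
]
X = [
    [1, 0, 1],
    [0, 1, 1],
    [2, 0, 0],
]


def combine(weights, vectors):
    """Weighted sum of equal-length vectors: sum of weights[k] * vectors[k]."""
    n = len(vectors[0])
    return [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(n)]


# Row i of B is the combination of X's rows weighted by row i of A
B_rows = [combine(row_of_A, X) for row_of_A in A]

# Textbook definition, for comparison
B_dots = [[sum(A[i][k] * X[k][j] for k in range(3)) for j in range(3)]
          for i in range(3)]

print(B_rows)  # [[7, 2, 3], [16, 5, 9], [27, 8, 15]]
```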

Summary

Of course, this way of thinking can sometimes make a simple operation look more complicated. But as you learn more, you’ll discover that the same formula in linear algebra has multiple “physical interpretations,” each suited to particular scenarios. The more of these tools you have in your toolbox, the more adept you become at matrix operations, which are a kind of high-dimensional thinking.