6.824-schedule.png

6.824-schedule.png

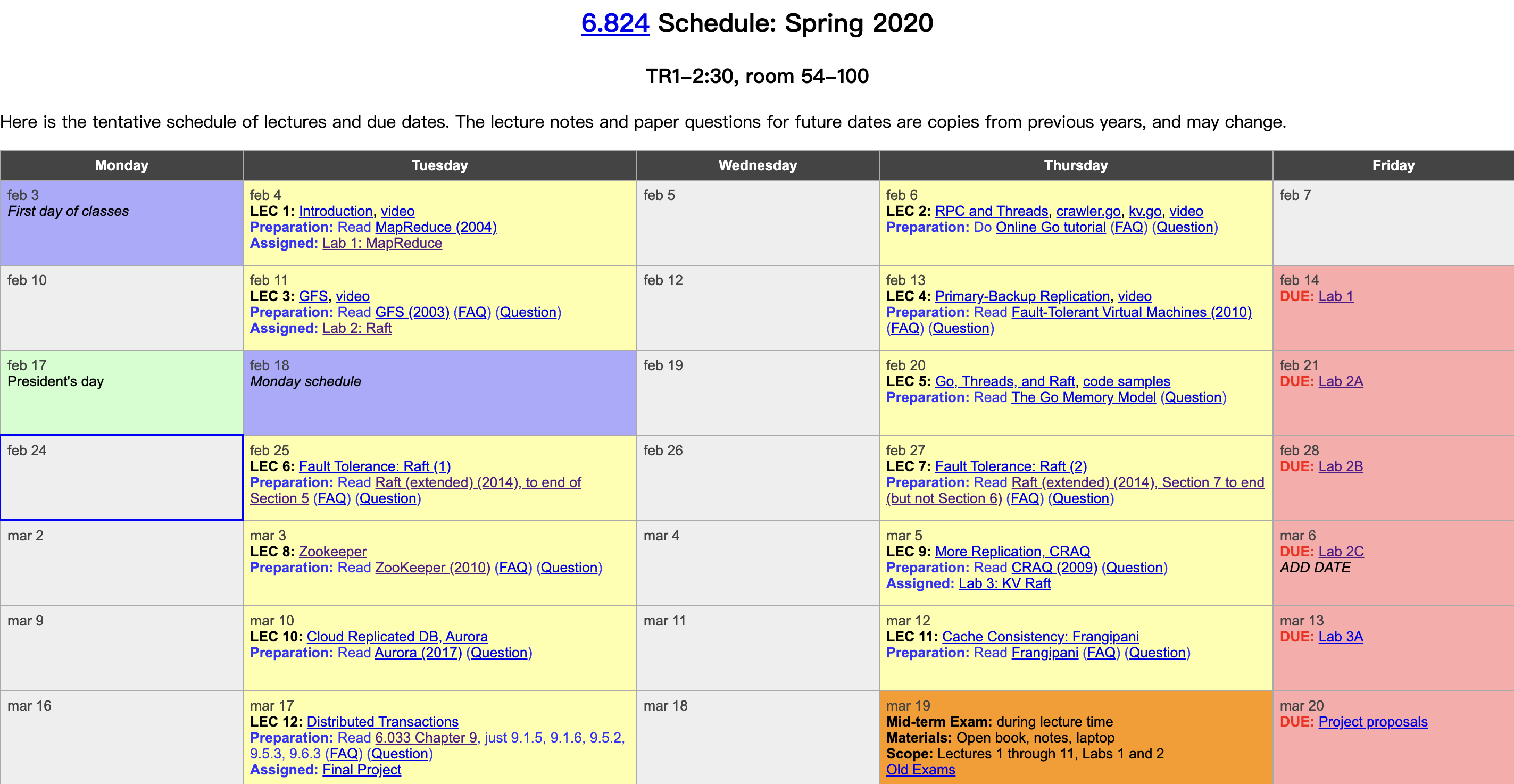

MIT finally released in-class video materials on YouTube this year. I had followed about half of this course before, and this year I plan to watch the videos and write some lecture notes. The course follows the thread of distributed systems fundamentals: fault tolerance, replication, and consistency, with carefully selected industrial-grade system papers as the main line, supplemented with detailed reading materials and sophisticated course labs, bridging academic theory and industrial practice. It is truly a rare gem of a distributed systems course. Course videos: Youtube, Bilibili. Course materials: 6.824 homepage. This is the fifth lecture note, including two parts: the first part is given by a TA about some Go primitives, design patterns, and practical techniques that will be used in lab 2, including memory models, goroutines and closures, time library, locks, condition variables, channels, signals, parallelism, and some common tools, etc. The second part is given by two other TAs sorting out some common bugs and debugging methods in Raft.

Author: Woodpecker Notes https://www.qtmuniao.com/2020/04/27/6-824-video-notes-5-go-concurrency/, please cite the source when reposting

Note: A goroutine is a lightweight thread. The terms goroutine and thread are used interchangeably below, both referring to threads in Go, although they are not completely equivalent.

Memory Model

Using threads serves two purposes:

- Improve performance by utilizing multiple cores.

- Construct code more elegantly.

In the labs for this course, we do not require using threads to maximize performance; we only require program correctness.

The same applies to the use of locks: we do not require fine-grained locking to boost performance; you may use coarse-grained locking to simplify code.

The memory model mainly discusses the ordering of code execution when multiple threads run. The main conclusion is that within a single thread, execution effects are guaranteed to be consistent with the sequential order of code statements. However, across threads, without explicit synchronization (via locks or channels), the order of statement execution cannot be guaranteed. That’s roughly what the memory model covers. The focus of this lecture is on exploring some typical code patterns that may be used in the labs.

Goroutine and Closure

Using for loops + goroutine can naturally express the process where a Leader sends messages to Followers in parallel. But note that when the loop variable (i in the example below) is referenced in a child goroutine, it’s best to make a copy beforehand (usually by passing it as a function parameter or copying it inside the loop body). In addition, WaitGroup is often used in the parent goroutine to blockingly wait for a group of child goroutines to finish. Example:

1 | func main() { |

Time Library

Using Go’s standard library time, you can conveniently implement doing something periodically, such as the heartbeat logic in Raft.

1 | func periodic() { |

When Raft is killed, you may want to terminate all background threads. You can use a shared variable as the loop exit condition to achieve this.

1 | var done bool |

It should be noted that when modifying and reading the shared variable done, both need to be wrapped with the lock sync.Mutex. This forces synchronization between multiple threads through sync.Mutex (one goroutine’s mu.Unlock will wake up another goroutine blocked on mu.Lock) to ensure that the main thread’s modification to done will definitely be seen by the child thread. Otherwise, without any synchronization measures, due to variable caching issues inside multiple threads, Go’s memory model does not strictly guarantee visibility of shared variables.

Mutex

There are mainly two situations where locks need to be used:

- Ensure visibility of shared variables among multiple threads.

- Ensure atomicity of a code block (it won’t be interleaved with statements in other goroutines).

Of course, these two situations are often one and the same.

Due to the fanciness of Go’s memory model, it is best to protect all accesses to shared variables with locks; otherwise the multithreaded code you write is likely to exhibit some hard-to-find and debug issues. Below is an example of using multiple threads to increment a shared variable without locking:

1 | func main() { |

The reason the final counter value is not 1000 is twofold:

counter = counter + 1is not atomic after being compiled into CPU instructions; parallel execution may produce interleaving.- After

counteris modified, it may not be seen by other threads in time, thus incrementing the old value.

Modified version:

1 | func main() { |

Let’s look at an example where Alice and Bob borrow money from each other and try to keep the total amount unchanged. We use one thread each to represent Alice lending money to Bob and Bob lending money to Alice.

1 | func main() { |

Will the above code print a violation? The answer is yes. Because the observation thread may print a violation when someone has lent out money but the other person hasn’t received it yet. This problem can be understood from the following two angles:

- Atomicity. Lending and borrowing should be an atomic operation, so the entire process needs to be wrapped with a lock. Otherwise, if observed at some intermediate moment, inconsistency will occur: the money has been lent out but not yet received, i.e., it’s “in flight”.

- Invariant. Locks can guard invariants: after acquiring the lock and entering the critical section, you may break the invariant (lending money; at this point the money is “in the air”, and observation at this time will show one dollar missing), but restore it before releasing the lock (receiving the money, moving it from “in the air” into another person’s account, keeping the sum of both accounts unchanged).

Therefore, the transaction process needs to be changed to:

1 | go func() { |

Condition

In Raft, there is a scenario where a Candidate asks all Followers for votes, then decides whether to become a Leader based on the collected votes.

1 | func main() { |

This approach has busy waiting, and the continuous locking and unlocking during the loop causes extremely high CPU usage.

A very simple performance improvement is to add time.Sleep(50 * time.Millisecond) in the busy waiting loop, which can greatly reduce CPU usage.

Another more efficient way is to use condition. A condition is bound to a lock. After acquiring the lock, it temporarily suspends the thread via Wait and releases the lock; then another thread can acquire the lock via Lock, and before leaving the critical section, call Broadcast to wake up all threads suspended on Wait for this lock. It similarly uses a signal mechanism to notify among multiple threads about the release and acquisition of locks.

Modified code using Condition:

1 | func main() { |

Note that the usage pattern of Condition requires that both cond.Broadcast and cond.Wait be inside the critical section guarded by the corresponding lock, and after calling cond.Broadcast the lock should be released promptly; otherwise it will cause contention from other threads for an unreleased lock.

1 | mu.Lock() |

In addition, cond.Signal only wakes up one thread that called cond.Wait to enter waiting, while cond.Broadcast wakes up all threads waiting on the corresponding lock. Of course, the former is more efficient.

Channel

Unbuffered channels are usually used as a control mechanism for synchronization among multiple threads.

1 | func main() { |

Using channels within the same thread is meaningless; they are generally used as a communication mechanism among multiple threads (for synchronization or passing messages).

Buffered channels are similar to a synchronous queue, but they are basically not used in this Raft lab. It is recommended to use shared variables + locks for synchronization and mutual exclusion among multiple threads in the lab.

Using channels for synchronization can serve a similar purpose to WaitGroup:

1 | func main() { |

Raft Deadlock

A very important condition for deadlock, loosely speaking, is hold and wait. That is, everyone is holding onto what they have while also wanting what the other has, which naturally forms a deadlock.

A simple principle is to release locks promptly when performing time-consuming tasks (such as RPC, IO).

RPC Reply Staleness

When a Candidate collects vote results, it needs to check whether it is still in the original term and whether it is still a Candidate. Otherwise two leaders may be elected.

1 | if rf.state != Candidate || rf.currentTerm != term { |

However, in fact checking only currentTerm is sufficient, but checking only state is not enough.

Debugging

DPrintf: server number is a very important field; it is recommended to output it at the beginning; then add output statements before and after places of interest.

Deadlock: you can send a SIGQUIT signal to a running Go program via control+\, then the program will exit and print the current stack traces of all threads.

Race conditions: go test -race -run 2A, use -race to have Go print the stack trace when it detects a race. Generally this occurs when shared variables are accessed by multiple threads, and at least one of the access points is not locked.

Glossary

Critical section (strict area): a code segment that multiple threads need to access mutually exclusively.

Busy wait: continuously looping until the condition is satisfied.

References

- Related English notes.

- Related code mentioned.