Imagine you are the CTO of a startup, wanting to quickly launch a database product viable for the AP (analytical processing) market, and it must have differentiated features (otherwise who would use a new product). What would you do?

In 2022, Firebolt published a paper specifically on this topic: Assembling a Query Engine From Spare Parts. The core idea is: assemble a database from open-source components, just like building a desktop PC from off-the-shelf parts.

Author: Woodpecker’s Notes https://www.qtmuniao.com/2023/10/05/firebolt-paper Please indicate the source when reposting

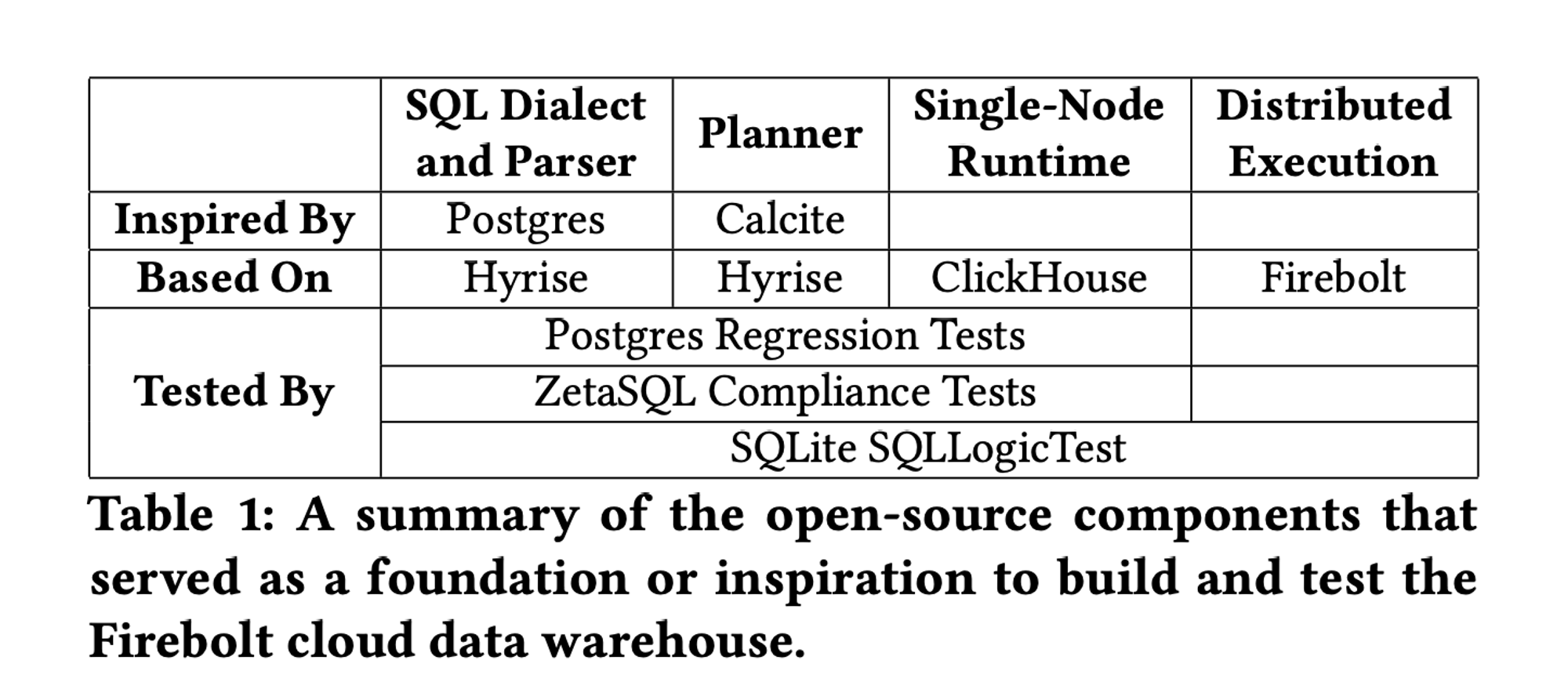

Let’s take a look at the “parts list”:

firebolt.png

firebolt.png

Anyone familiar with database kernel development knows that a database contains many components. Just for the storage layer I work on, there is a long list; you can refer to this article I wrote earlier. Not to mention the biggest part of a database—the query engine. Even after simplifying again and again, you still need a Parser, a Planner, and a Runtime.

Of course, for a database, the most important thing is also the interface it exposes to the outside—SQL. Although there is the ANSI SQL standard, what is actually used in industry are various “dialects.” Although interfaces should be independent of implementation, different implementations inevitably surface and affect the interface. Moreover, distributed databases inevitably have to pick a side and choose a dialect. For example, TiDB chose to be compatible with MySQL, while Firebolt chose to be compatible with Postgres.

Component Selection

Having chosen the compatible SQL dialect, the next step is selecting the important components mentioned above: Parser, Planner, and Runtime. Let’s see how Firebolt made its choices.

Parser & Planner

The Parser performs syntax analysis, tokenizing SQL statements and organizing them into an abstract syntax tree (AST). The Planner optimizes the AST based on rules and cost models into an executable operator tree.

Since the interface between Parser and Planner is the AST, and there is generally no unified standard for ASTs—it is difficult to combine a Parser and a Planner from different projects. Therefore, Firebolt tended to look for a project that included both modules.

Firebolt’s requirements for these two modules were:

- The Parser needed to support most Postgres SQL dialect features, including DDL, DML, DCL, and DQL

- The LogicPlanner needed to support important rules for modern data warehouses, such as predicate pushdown and subquery decorrelation

- The LogicPlanner needed to support extensions for rule-based transformations

- The PhysicalPlanner needed to support cost-based join reordering

- The PhysicalPlanner needed to support custom statistics collection and cost models

- The Planner needed to support complex data types, such as arrays and structs

At that time, there were quite a few open-source projects for these two modules on the market. Let’s list their pros and cons one by one:

| Project | Description | Pros | Cons |

|---|---|---|---|

| Postgres Parser | 1. Naturally compatible with the Postgres SQL dialect 2. libpg_query has already isolated the Parser from other Postgres modules |

1. Stripping the Planner out of the Postgres project requires a lot of work. | |

| Google ZetaSQL | A C++ project from Google, including Parser and Analyzer | 1. Validated in many Google products such as BigQuery, Spanner, Dataflow, Dremel, F1, and Procella 2. Clean project, well-tested, and production-ready |

1. Does not support many Postgres SQL features 2. Only supports simple operator-tree transformations 3. The Planner is also quite simple |

| Apache Calcite | An open-source Java project for query processing and optimization in the data processing field | 1. Supports multiple SQL dialects 2. Good modularity; the Planner supports custom rules 3. Good code quality, well-tested, and widely used (Hive, Storm, Flink, Druid, and MapD) |

1. Not written in C++, making it hard to integrate with other components at the code level |

| CWI Duckdb | An in-memory, embedded analytical database written in C++ | 1. Well-tested and widely used in interactive data analytics scenarios 2. Supports both rule-based and cost-based plan rewriting 3. Uses libpg_query as the baseline for its Parser, so it is very compatible with the Postgres SQL dialect |

1. Still very immature when Firebolt was evaluating options |

| Hyrise | An in-memory database developed by HPI | 1. Simple code base 2. Supports both rule-based and cost-based plan rewriting |

1. It is an academic project 2. Not sufficiently tested, and SQL syntax coverage is also lacking |

In the end, after various considerations, Firebolt chose Hyrise:

- Written in C++

- Supports both rule-based and cost-based plan rewriting

- Simple code base, easy to refactor

Drawing on Calcite’s design and conceptual framework, Firebolt performed extensive modifications, such as adding support for complex types and changing the representation of logical execution plans, among other things.

Runtime

The Runtime is the component that executes the optimized query plan, and it has a critical impact on database performance. At the time, as a small startup, Firebolt still chose to use an open-source project.

There are two implementation approaches for Runtime—vectorization and code generation. The latter may be more efficient, but it has higher implementation complexity and requires too much R&D investment.

To summarize, Firebolt’s requirements for a Runtime project were:

- Support vectorized execution

- Have a certain degree of extensibility to allow for distributed data processing modifications

- Since the Runtime and storage engine are tightly coupled, the project should ideally also implement an efficient columnar storage engine

Compared to the variety of choices for Parser and Planner, there were relatively fewer options for Runtime (at the time, Facebook Velox was not yet open-sourced either). In the end, Firebolt chose ClickHouse:

- It is a vectorized execution engine

- It is well-tested

- It has its own columnar storage format—MergeTree—which supports efficient data pruning

Stitching Planner and Runtime Together

Since the format required by ClickHouse’s execution engine and the output format of Hyrise are not the same—that is, the logical query plan tree (LQP) produced by the latter cannot be directly executed by the former—Firebolt came up with a hacky solution: backtranslating the LQP back into ClickHouse SQL.

This approach could work, but it was not efficient and would lose some optimization information. Later, Firebolt replaced it, directly translating the LQP into a multi-stage distributed execution plan (similar to the multi-stage division during Spark execution), and used protobuf for serialization and deserialization to pass it to each execution node for execution. Of course, this also required some modifications to ClickHouse.

Distributed Execution

Although ClickHouse itself supports distributed execution for certain queries, such as selective table scans, distributed aggregation, broadcast-based joins, and so on. However, for some more common SQL patterns in data warehouses, ClickHouse cannot execute them in a distributed manner very well. For example, joining two large tables, high-cardinality grouped aggregation, distributed sorting, and so on.

To address this, Firebolt implemented its own distributed execution framework, splitting the execution plan into different stages at shuffle operators.

Summary

This is how Firebolt, in its early days as a small startup with very few people, rapidly assembled a commercially viable data warehouse project in eighteen months, thereby laying the foundation for raising a large amount of funding later on. This also reflects, from another angle, the prosperity of the current open-source database ecosystem.

Of course, to make it commercially viable, many aspects still needed polishing, the most important of which was test coverage. I won’t go into details here; those interested can read the original paper.

This article comes from my paid column on Xiaobotong, Daily Records of Systems, which focuses on the most fundamental direction of the Internet—large-scale data systems. It includes series on graph databases, code deep dives, high-quality English podcast translations, database learning, paper reading, and more. Friends who enjoy my articles are welcome to subscribe to the 👉column to support me. Your support is very important for my continued creation of high-quality articles.