6.824-schedule.png

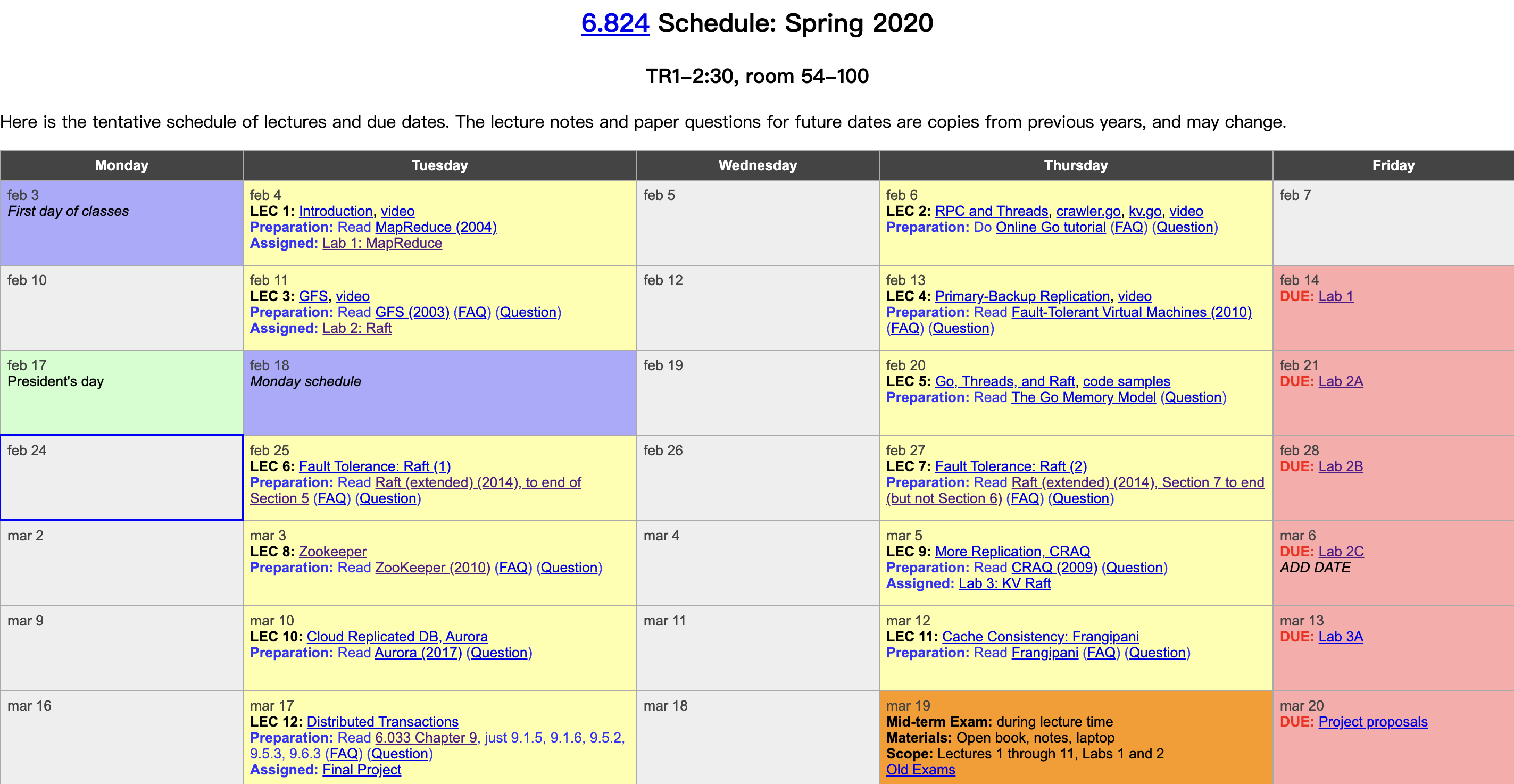

MIT finally released lecture videos on YouTube this year. I had followed about half of this course before, and this year I plan to watch the videos and take notes. The course is structured around distributed systems fundamentals—fault tolerance, replication, and consistency—and uses carefully selected industrial-grade system papers as its backbone, supplemented with extensive reading materials and well-designed labs, bridging academic theory and industrial practice. It is truly an exceptional course on distributed systems. Course videos: YouTube, Bilibili. Course materials: 6.824 homepage. This post covers the first lecture: Introduction.

Course Background

Reasons for building distributed systems:

- Parallelism: harnessing resources in parallel (improving efficiency).

- Fault tolerance.

- Physical: inherent physical distribution of the system.

- Security: untrusted counterparts (blockchain).

Challenges faced by distributed systems:

- Concurrency: many components, complex parallelism, intricate interactions.

- Partial failure: partial failures exist, unlike single machines that either run normally or crash completely.

- Performance: careful design is required to achieve performance linearly proportional to the number of machines.

Author: Muniao’s Notes https://www.qtmuniao.com/2020/02/29/6-824-video-notes-1/, please credit when reposting

Course Components

- Lectures: instruction and some case studies.

- Papers:

- Includes both classic and cutting-edge, academic and industrial works.

- Understand their ideas, learn their implementations, and evaluate their performance.

- Focus on important parts, skip secondary details.

- All paper links are on the course homepage.

- Exams: a midterm and a final.

- Labs: four labs

- Lab 1: MapReduce

- Lab 2: Raft fault tolerance

- Lab 3: K/V server using Raft

- Lab 4: Shared K/V based on Lab 3

Distributed systems are notoriously hard to debug—be mentally prepared and start early.

- Project: you can choose your own topic and work in a team to replace Lab 4.

Course Content

This course aims to learn the abstractions that underpin application infrastructure, including:

- Storage: a straightforward and commonly used abstraction; how to build multi-replica, fault-tolerant, high-performance distributed storage systems.

- Communication: how to communicate reliably.

- Computation: modern large-scale computation, such as MapReduce.

The ultimate goal is to provide a general interface similar to that of a single machine, shielding applications from the details of distribution, while achieving both fault tolerance and performance.

What implementations do we have for these abstractions?

- RPC: communicate across nodes as if making a local call.

- Concurrency, Threads: vehicles for concurrency.

- Concurrency, Locks: concurrency control.

Performance

Scalability:

- Resources can be aggregated linearly: using twice as many machines yields twice the throughput.

- This means when you hit a bottleneck, you only need to spend a small amount of money on machines rather than paying high salaries to programmers for refactoring.

- But this property is very hard to achieve. Usually, when you scale one component, the bottleneck shifts to another; infinite scaling of all components is difficult.

Fault Tolerance

Single machines are great, but for a cluster of thousands of machines, failures are the norm. For example:

- Host crashes

- Network jitter

- Switch failures

Availability.

Recoverability: automatic recovery without human intervention, without affecting correctness.

Means:

NV storage: persistence.

Replication: multiple copies.

Consistency

Factors causing inconsistency in distributed systems:

- Caching.

- Replication.

Different levels of consistency:

-

Strong consistency: every client can always read data previously written (by themselves or others). Achieving strong consistency in a multi-replica system is extremely costly and requires a great deal of communication. Briefly, there are two methods:

- Write to all replicas on every change.

- Read from all replicas on every read, using the data with the latest timestamp.

-

Weak consistency: for the sake of performance, industrial systems usually choose weak consistency.

MapReduce

Background

Around 2003, Google was facing massive (tens of terabytes) index data and web-scale structured data, needing to find the most important web pages. This can be simplified to a sorting problem, but sorting at this scale is not an option for a single machine. And not all engineers have the ability to hand-craft distributed systems, so there was a need for a distributed framework that shields application programmers from the details of the distributed environment:

- How to efficiently distribute work across thousands of machines.

- How to control data movement.

- How to handle fault tolerance.

And so on.

How It Works

Using WordCount as an example:

Map: document -> (word, 1)

Shuffle: group by word on the Map machine, send each key range to the corresponding Reduce machine.

Reduce: List(word, 1) -> (word, count)

Terminology

Job: Job

Task: Task, divided into Map Task and Reduce Task.

Worker node: worker server

Worker process: worker process

Master node: master server

Storage Coordination

To better support parallel reads and writes, a network file system is needed to coordinate input and output—this is GFS (Google File System).

GFS can be simply understood as a network file system that splits large files into small 64 MB blocks and distributes them across different machines.

Network Overhead

To avoid the main bottleneck at the time (network transfer), Google made a series of optimizations, including running GFS and MR on the same cluster to reduce network transfer for reading and writing data. The specific approach was to let Map tasks go to the data (blocks)—scheduling tasks to the machines where their input resides. But for Reduce tasks, there will always be significant network overhead: GFS replicates data redundantly, meaning each result has to be written multiple times.

However, modern data centers can greatly improve network transfer speeds through many means, such as using multiple root routers to distribute traffic, meaning there can be more flexibility in design without having to optimize too much for network transfer.