6.824-schedule.png

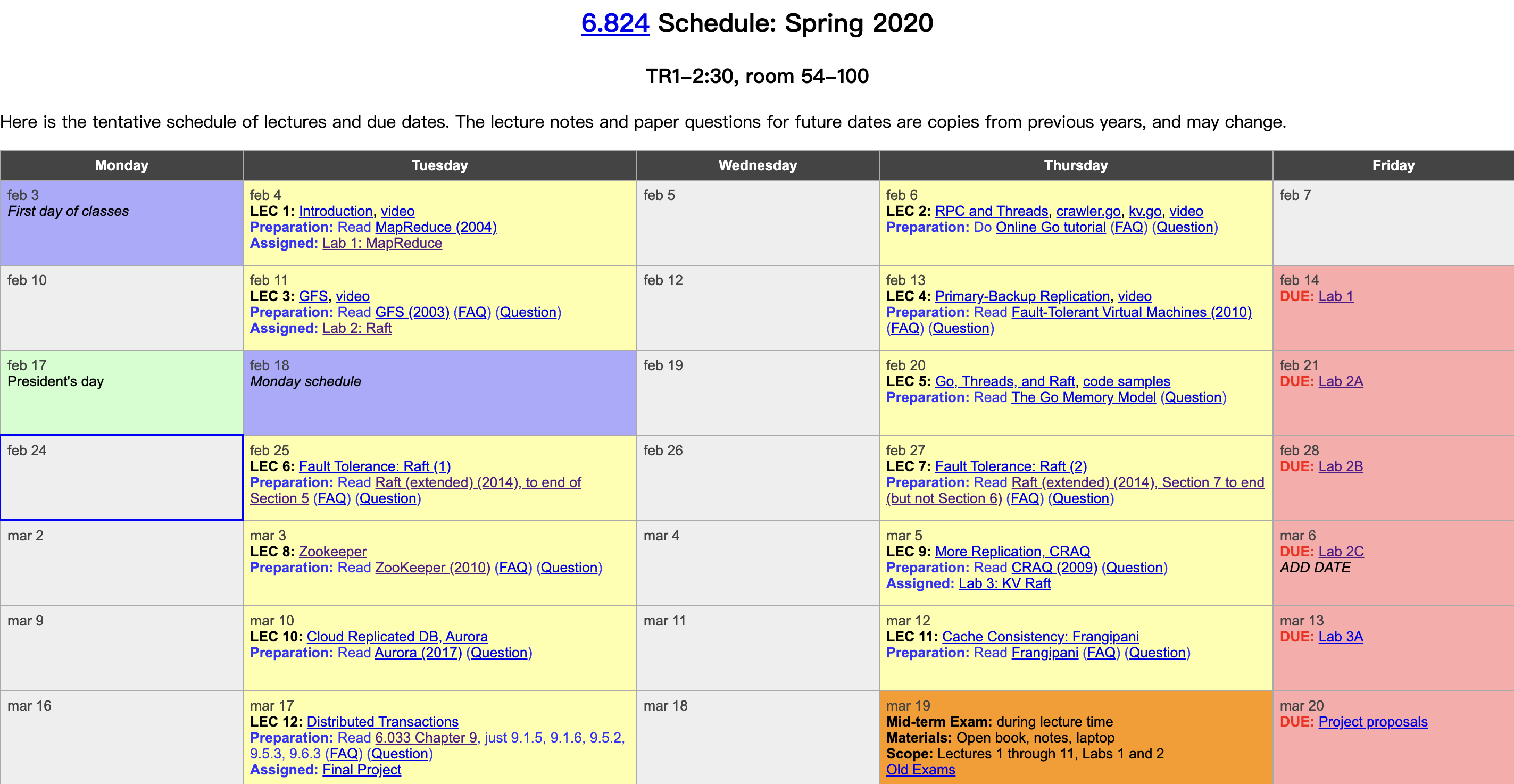

MIT has finally released in-class video materials on YouTube this year. I followed about half of this course before, and this year I plan to watch the videos and write some lecture notes. The course takes distributed fundamentals—fault tolerance, replication, and consistency—as its main thread, uses carefully selected industrial-strength system papers as its backbone, and fills in detailed reading materials and well-crafted labs, bridging academic theory and industrial practice. It is truly a rare and excellent course on distributed systems. Course videos: Youtube, Bilibili. Course materials: 6.824 homepage. This post is the lecture notes for the third class, GFS.

Overview

Storage is a very critical abstraction with a wide range of uses.

The GFS paper also touches on many issues related to fault tolerance, replication, and consistency.

GFS itself is a very successful production system inside Google. Its key ideas were well organized into a single academic paper, covering many issues from hardware to software, and is well worth studying.

For a detailed understanding of GFS, you can also check out my earlier GFS paper notes.

Author: 木鸟杂记 https://www.qtmuniao.com/2020/03/14/6-824-vidoe-notes-3-gfs/, please indicate the source when reposting

Why It’s Hard

-

High Performance --> Sharding

In a distributed system, the natural desire is to use a large number of machines to provide proportional performance, so data is usually scattered across different machines for parallel access. We call this Sharding. But the more shards there are, the higher the failure rate.

-

Faults --> Fault Tolerance

With more failures comes the need for automatic fault tolerance. The simplest, most direct, and usually most effective method of fault tolerance is Replication. If replicas are mutable, they need to be synchronized periodically, which leads to the issue of consistency.

-

Replication --> Consistency

Of course, through careful design, system consistency can be maintained, but this means you have to sacrifice performance.

-

Consistency --> Low Performance

This is somewhat similar to proof by contradiction, ultimately deriving a contradiction that illustrates the difficulty of building a distributed storage system. In practice, under a given scenario, we have more room for trade-offs, making it possible to design a reasonable system.

Consistency

Strong Consistency

That is, even though there are many replicas and machines in the storage system, the externally observable behavior is like a single machine: all clients can read what other clients have previously written. This behavior, or guarantee, seems simple and natural, but in a distributed environment, it is by no means easy. For those who want to learn more about this, you can check out a classic article on CAP that I translated here.

Bad Design

In order to keep all replicas consistent, synchronization can be done on the client side: every write operation is sent to multiple replicas in parallel. Each replica server may receive the write operations in a different order, resulting in inconsistency among replicas.

GFS

Before Google’s three famous papers (MapReduce, GFS, Bigtable) came out, most distributed theories remained in academia. Because Google faced the need to process, store, and access massive amounts of data (YouTube videos, web indexes, etc.), it was among the earliest to develop practical large-scale distributed frameworks.

Characteristics

- Big, Fast: rapid access to massive amounts of data

- Global: data access and sharing across different sites

- Sharding: concurrent access by multiple clients to increase throughput

- Auto Recovery: with so many machines, automated operations are a must

However, going forward, we will only discuss GFS with the following constraints:

- Deployed in a single datacenter

- For internal use only, without much consideration for security

- Sequential reads and writes of large data, rather than random access

The value of GFS lies in the fact that it is an industrial-strength system tested by real-world practice and deployed on thousands of machines, overturning many classic design assumptions in academia, such as:

- To ensure error-free data access, strong consistency guarantees must be provided (GFS only provides a form of weak consistency)

- For system reliability, multiple machines are used to ensure the reliability of the master node (GFS uses a single Master)

System Roles

Clients: The client, which accesses the system through an API.

Master: Stores the namespace and metadata.

ChunkServer: Storage nodes.

Master Data Structures

Master data:

There are mainly two maps:

-

File name to array of chunk handles (nv)

-

Chunk handle to chunk metadata (including replica locations, chunk version number, primary chunk, lease expiration time): chunk handle —> list of chunk servers(v)/version(nv)/ Primary(v) / lease expire time(v)

Both data structures are stored in memory (RAM). However, for crash recovery, some information (marked nv: non-volatile) needs to be written to disk, that is:

-

For reads, simply read from memory.

-

For writes, modify memory while simultaneously recording an operation log (LOG) + snapshot (CheckPoint) on disk.

For other information (marked v: volatile), it can be reconstructed from heartbeats sent by chunkservers.

Using a log rather than a database (DB) to record operation information is because the former is faster on disk. But if there are too many operations, recovery will be slow. Can we compress it? Hence the snapshot: the in-memory state corresponding to the operation log is captured in some format (e.g., B-tree) as a snapshot. The two are combined: historical information is stored as a snapshot, and recent information is stored as an operation log. This improves space utilization and reduces operation latency.

Read/Write Flow

READS:

- File name, offset --request–> Master

- Master --response–> Chunk handle, list of Chunk replica addresses (Client caches this information)

- The client requests data from the chunk server hosting a certain replica (e.g., the physically closest one), and the chunk server returns the corresponding data.

Q&A:

- What if the data to be accessed spans chunks? GFS provides a client library that automatically splits it into multiple requests. The client does not need to worry about these details.

WRITES:

Here we only discuss Record Appends, which fall into two cases:

- No Primary on Master

- Find all up-to-date replicas (i.e., those with a version number greater than or equal to the latest version known by the Master)

- The Master selects one of them as the Primary, and the others become Secondaries

- The Master increments the version number

- The Master syncs the new version number to all primary and secondary replicas; at the same time, it grants a lease to the Primary

- The Master persists the version number

- Master has Primary information

- The Primary selects an offset (since appends may be concurrent, the Primary is responsible for serializing concurrent appends into a write order, i.e., assigning a different offset to each append)

- All replicas are notified to write data at that offset

- If all replicas reply to the Primary that the write succeeded, the Primary replies to the Client that the write succeeded

- If any replica fails to write, the Primary replies to the Client that the write failed. The client library will automatically retry the entire Append process.

Q&A:

- If the Client write fails, inconsistent regions may eventually exist across different replicas (some writes succeeded, some failed). However, as long as the write eventually succeeds, the data at the returned offset is guaranteed to be consistent across all replicas. The inconsistent regions caused by intermediate write failures will be skipped during reads.

- When syncing data, the Client only sends data to the closest replica, which then forwards it to the other replicas. This chain synchronization avoids switch bandwidth bottlenecks.

- The Master only updates the version number when it believes the requested chunk has no Primary. If it can find the Primary address in its in-memory table, it returns it directly to the Client.

- When a network partition occurs, the Primary and Client may still be connected, but the Primary is disconnected from the Master. When the lease expires, if the Master has not received a heartbeat from the Primary (the Primary renews its lease by sending heartbeats to the Master), the Master will consider the Primary dead and select a new Primary. At this point, split brain can occur. This situation is rather tricky. One solution is for the Master to wait until the old Primary’s lease expires (the old Primary also knows its own lease expiration time and will automatically lose its Primary identity if it cannot renew normally) before selecting a new Primary.

- What if the file to be appended to does not yet exist? The Master initializes a version number, then randomly selects a Primary and several secondaries, and replies to the Client.

Some Issues

To achieve consistency, a protocol similar to two-phase commit may be needed, but this will undoubtedly reduce performance.

As the data volume grows, metadata increases, and the Master’s memory may no longer be sufficient—this is the single-point bottleneck problem of GFS.

Master recovery requires manual intervention, causing system downtime recovery to potentially take tens of minutes.