
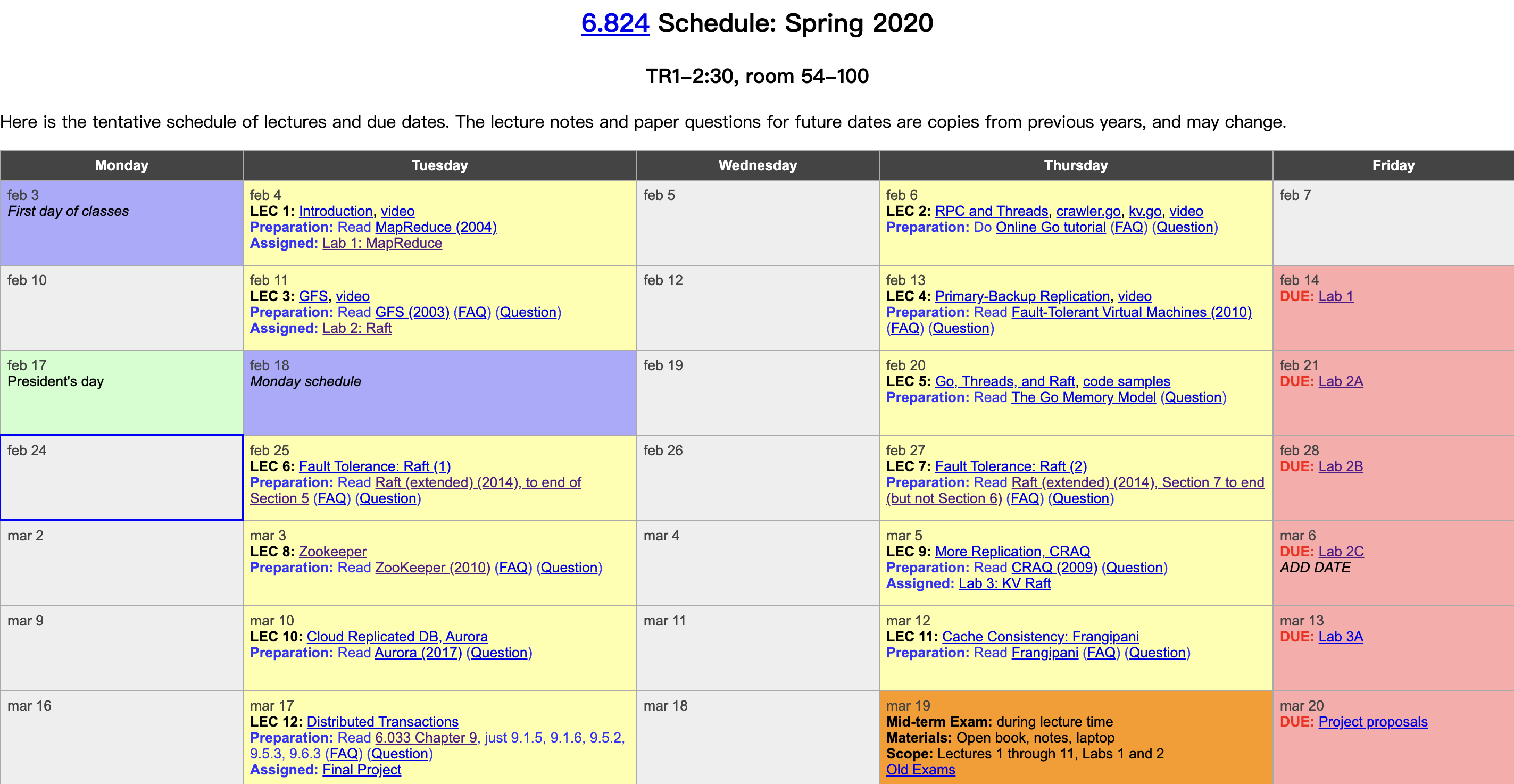

MIT finally released the lecture videos on YouTube this year. I had followed about half of this course before, and this year I plan to watch through the videos and take some lecture notes. The course is structured around the fundamentals of distributed systems—fault tolerance, replication, and consistency—using carefully selected industrial-grade system papers as the backbone, supplemented with detailed reading materials and well-crafted labs, bridging academic theory and industrial practice. It is truly an excellent distributed systems course. Course videos: YouTube, Bilibili. Course materials: 6.824 homepage. This post is the notes for the second lecture, RPC and threads.

Why Go

- Modern syntax. It has built-in language-level support for threads (goroutines) and channels. It also has good support for locking and synchronization between threads.

- Type safe. Memory safe, making it hard to write bugs like out-of-bounds memory accesses as in C++.

- Garbage collection (GC). No need for manual memory management, which matters most in multi-threaded programs, where it is hard to tell when the last thread has finished using a piece of memory and it is safe to free.

- Concise and intuitive. It has far fewer complex language features than C++, and its error messages are easy to read.

Author: 木鸟杂记 https://www.qtmuniao.com/2020/02/29/6-824-video-notes-2/, please indicate the source when reposting

Threads

Why are threads so important? Because they are our primary means of controlling concurrency, and concurrency is the foundation of building distributed systems. In Go, you can think of a goroutine as a thread—the two terms are used interchangeably below. Each thread can have its own memory stack and registers, but they can share a single address space.

Reasons for Use

IO concurrency: A historical term; in the single-core era, IO was the main bottleneck. To make full use of the CPU, when one thread is performing IO, it can yield the CPU so that another thread can perform computation, read, or send network messages, etc. Here, it can be understood as: you can use multiple threads to send multiple network requests in parallel (such as RPC, HTTP, etc.), and then wait for their responses separately.

Parallelism: Make full use of multi-core CPUs.

Regarding the difference and relationship between concurrency and parallelism, you can read this article. Just remember two keywords: logical concurrent design vs. physical parallel execution.

Convenience: For example, you can start a thread in the background to execute something periodically, or to check something regularly (such as a heartbeat).

Q&A:

- How can concurrency be handled without using threads? Event-driven asynchronous programming. However, the multi-threaded model is easier to understand, since the execution order inside each thread is roughly consistent with your code order.

- What is the difference between a process and a thread? A process is an abstraction provided by the operating system that includes its own address space. A Go program starts as a single process and can launch many threads inside it. (Strictly speaking, goroutines are user-space threads that the Go runtime multiplexes onto operating system threads.)

Challenges

Shared memory is error-prone. A classic problem is that when multiple threads execute the statement n = n + 1 in parallel, since this operation is not atomic, without locking, it is easy for n to end up with an unexpected value.

We call this situation a race: two or more threads access the same shared variable at the same time, and at least one of them writes to it.

The solution is to add locks, but how to lock correctly while balancing performance and avoiding deadlocks is a subject of its own.
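To make the n = n + 1 problem concrete, here is a minimal sketch of the locked version. The function name incrementLocked and the worker count are my own choices for illustration, not something from the lecture.

```go
package main

import (
	"fmt"
	"sync"
)

// incrementLocked (hypothetical name) starts `workers` goroutines that each
// execute n = n + 1 once. The mutex makes the read-modify-write atomic.
// Removing the Lock/Unlock pair reintroduces the race described above.
func incrementLocked(workers int) int {
	var mu sync.Mutex
	var wg sync.WaitGroup
	n := 0
	for i := 0; i < workers; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			mu.Lock()
			n = n + 1
			mu.Unlock()
		}()
	}
	wg.Wait() // all increments have finished once Wait returns
	return n
}

func main() {
	fmt.Println(incrementLocked(1000)) // prints: 1000
}
```

Without the lock, two goroutines can both read the same old value of n and both write back old+1, so one update is lost.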

Q&A:

- Does Go know the mapping between locks and resources (some shared variables)? Go does not know; it simply waits for the lock, acquires the lock, and releases the lock. Programmers need to maintain this themselves in their minds and logic.

- Does Go lock all variables of an object or only some? Same as the previous question: Go does not know any relationship between locks and variables. The Lock primitive itself is very simple: when goroutine0 calls mu.Lock(), if no other goroutine holds the lock, goroutine0 acquires it; if another goroutine holds the lock, goroutine0 waits until the lock is released. Of course, in some languages, such as Java, objects or instances are bound to locks to indicate the scope of the lock.

- Should a Lock be a private variable of some object? If possible, it is best to do so. But if there is a need for cross-object locking, it needs to be exposed, while being careful to avoid deadlocks.
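As a small illustration of keeping the lock private, the sketch below hides a mutex inside a type and only exposes a method that takes it. The type visited and the method testAndSet are hypothetical names; the link between mu and seen exists only by convention, exactly as the answers above describe.

```go
package main

import (
	"fmt"
	"sync"
)

// visited is a hypothetical type. mu is unexported and, by convention only,
// protects seen; Go itself knows nothing about that relationship.
type visited struct {
	mu   sync.Mutex
	seen map[string]bool
}

func newVisited() *visited {
	return &visited{seen: make(map[string]bool)}
}

// testAndSet marks url as seen and reports whether it had been seen before.
// The check and the update happen under one lock acquisition.
func (v *visited) testAndSet(url string) bool {
	v.mu.Lock()
	defer v.mu.Unlock()
	was := v.seen[url]
	v.seen[url] = true
	return was
}

func main() {
	v := newVisited()
	fmt.Println(v.testAndSet("a"), v.testAndSet("a")) // prints: false true
}
```

Callers never touch mu directly, so they cannot forget to lock or unlock it.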

Coordination

- channels: The approach Go recommends most, available in unbuffered (blocking) and buffered forms.

- sync.Cond: A condition variable, used to signal waiting goroutines that some state has changed.

- sync.WaitGroup: Blocks until a group of goroutines has finished executing, which will be mentioned later.
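Of the three, sync.Cond is probably the least familiar, so here is a minimal sketch of how it can be used. The function waitForAll and the counter finished are names I made up for the example.

```go
package main

import (
	"fmt"
	"sync"
)

// waitForAll (hypothetical name) starts `workers` goroutines and blocks until
// every one of them has reported in, using sync.Cond as the signal.
func waitForAll(workers int) int {
	var mu sync.Mutex
	cond := sync.NewCond(&mu)
	finished := 0

	for i := 0; i < workers; i++ {
		go func() {
			mu.Lock()
			finished++
			mu.Unlock()
			cond.Broadcast() // wake the waiter so it re-checks the condition
		}()
	}

	mu.Lock()
	for finished < workers { // always re-check the condition in a loop
		cond.Wait() // releases mu while sleeping, re-acquires it on wake-up
	}
	got := finished
	mu.Unlock()
	return got
}

func main() {
	fmt.Println(waitForAll(10)) // prints: 10
}
```

The condition is only ever read or written while holding mu, which is what prevents a wake-up from being missed.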

Deadlock

Conditions for occurrence: multiple locks, circular dependencies, and hold-and-wait.

If your program stops working but hasn’t crashed, you need to check whether it is deadlocked.
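One common way to break the circular dependency is to always acquire locks in the same global order. The sketch below is only an illustration of that idea: the account type, the id field used for ordering, and the transfer function are all hypothetical, and it assumes the two accounts are distinct.

```go
package main

import (
	"fmt"
	"sync"
)

// account is a hypothetical type used only for this illustration.
type account struct {
	id      int
	mu      sync.Mutex
	balance int
}

// transfer locks both accounts in ascending id order, so two opposite
// transfers can never each hold one lock while waiting for the other.
// Assumption: from and to are different accounts.
func transfer(from, to *account, amount int) {
	first, second := from, to
	if second.id < first.id {
		first, second = second, first
	}
	first.mu.Lock()
	defer first.mu.Unlock()
	second.mu.Lock()
	defer second.mu.Unlock()
	from.balance -= amount
	to.balance += amount
}

// runTransfers moves money back and forth concurrently and returns the
// final balances.
func runTransfers(rounds int) (int, int) {
	a := &account{id: 1, balance: 100}
	b := &account{id: 2, balance: 100}
	var wg sync.WaitGroup
	for i := 0; i < rounds; i++ {
		wg.Add(2)
		go func() { defer wg.Done(); transfer(a, b, 1) }()
		go func() { defer wg.Done(); transfer(b, a, 1) }()
	}
	wg.Wait()
	return a.balance, b.balance
}

func main() {
	fmt.Println(runTransfers(100)) // prints: 100 100
}
```

If transfer instead locked from first and to second, the two opposite transfers could each grab one lock and wait forever for the other.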

Web Crawler

1. Start from a seed web page URL.

2. Fetch its content text via an HTTP request.

3. Parse all URLs contained in the content, and repeat steps 2 and 3 for all URLs.

To avoid fetching the same page repeatedly, all fetched URLs need to be recorded.

Because:

- The number of web pages is huge.

- Network requests are slow.

Fetching one by one takes too long, so parallel fetching is needed. The difficulty here is how to determine that all web pages have been fetched and that crawling should end.

Crawler Code

The code is available in the reading materials.

Serial crawling. Perform a depth-first traversal (DFS) of the graph structure formed by all web pages, using a set named fetched to store all URLs that have already been crawled.

func Serial(url string, fetcher Fetcher, fetched map[string]bool) {
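A minimal sketch of the serial DFS described above could look like the following. It is a reconstruction under assumptions rather than the course's exact listing: the Fetcher interface shape (Fetch returning the URLs found on a page) and the in-memory fakeFetcher that stands in for real HTTP are both my own.

```go
package main

import "fmt"

// Fetcher is an assumed interface: Fetch returns the URLs found on a page.
type Fetcher interface {
	Fetch(url string) ([]string, error)
}

// fakeFetcher is a hypothetical in-memory "web" used instead of real HTTP.
type fakeFetcher map[string][]string

func (f fakeFetcher) Fetch(url string) ([]string, error) {
	if urls, ok := f[url]; ok {
		return urls, nil
	}
	return nil, fmt.Errorf("not found: %s", url)
}

// Serial crawls depth-first. fetched is a map, so the recursive calls all
// share and update the same set.
func Serial(url string, fetcher Fetcher, fetched map[string]bool) {
	if fetched[url] {
		return // already crawled
	}
	fetched[url] = true
	urls, err := fetcher.Fetch(url)
	if err != nil {
		return
	}
	for _, u := range urls {
		Serial(u, fetcher, fetched)
	}
}

func main() {
	web := fakeFetcher{
		"a": {"b", "c"},
		"b": {"a", "c"},
		"c": {"a"},
	}
	fetched := make(map[string]bool)
	Serial("a", web, fetched)
	fmt.Println(len(fetched)) // prints: 3
}
```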

Concurrent crawling

- Turn the fetching part into a parallel operation using the go keyword. But if only this change is made, without using some mechanism (such as sync.WaitGroup) to wait for child goroutines before returning, then it may only crawl the seed URL, while also causing child goroutines to leak.

- If accessing the already-fetched URL set fetched without a lock, it is very likely to fetch the same page multiple times.

WaitGroup

var done sync.WaitGroup

WaitGroup internally maintains a counter: calling wg.Add(n) increases it by n; calling wg.Done() decreases it by 1. Calling wg.Wait() will block until the counter reaches 0. Therefore, WaitGroup is well suited for scenarios where you need to wait for a group of goroutines to finish.
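Putting the lock and the WaitGroup together, a concurrent crawler along these lines might look like the sketch below. Treat it as an illustration, not the course's exact code: the Fetcher interface, the in-memory fakeFetcher, the fetchState struct and the function name ConcurrentMutex are assumptions on my part.

```go
package main

import (
	"fmt"
	"sync"
)

// Fetcher is an assumed interface: Fetch returns the URLs found on a page.
type Fetcher interface {
	Fetch(url string) ([]string, error)
}

// fakeFetcher is a hypothetical in-memory "web" used instead of real HTTP.
type fakeFetcher map[string][]string

func (f fakeFetcher) Fetch(url string) ([]string, error) {
	if urls, ok := f[url]; ok {
		return urls, nil
	}
	return nil, fmt.Errorf("not found: %s", url)
}

// fetchState bundles the shared set with the mutex that protects it.
type fetchState struct {
	mu      sync.Mutex
	fetched map[string]bool
}

func ConcurrentMutex(url string, fetcher Fetcher, f *fetchState) {
	// Check and mark under one lock so no two goroutines fetch the same URL.
	f.mu.Lock()
	already := f.fetched[url]
	f.fetched[url] = true
	f.mu.Unlock()
	if already {
		return
	}

	urls, err := fetcher.Fetch(url)
	if err != nil {
		return
	}
	var done sync.WaitGroup
	for _, u := range urls {
		done.Add(1)
		go func(u string) { // u is passed as an argument, fetcher is captured
			defer done.Done()
			ConcurrentMutex(u, fetcher, f)
		}(u)
	}
	done.Wait() // do not return until every child crawl has finished
}

func main() {
	web := fakeFetcher{"a": {"b", "c"}, "b": {"a", "c"}, "c": {"a"}}
	f := &fetchState{fetched: make(map[string]bool)}
	ConcurrentMutex("a", web, f)
	fmt.Println(len(f.fetched)) // prints: 3
}
```

Calling done.Add(1) before starting each goroutine, rather than inside it, ensures the counter is already raised by the time done.Wait() runs.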

Q&A

- What if a goroutine exits abnormally without calling wg.Done()? You can use defer at the beginning of the goroutine: defer wg.Done().

- If two goroutines call wg.Done() at the same time, could there be a race so that the internal counter is not decremented correctly twice? No. WaitGroup is safe for concurrent use; its internal synchronization ensures that each Done() is applied atomically.

- What is the relationship between variables in an anonymous function and variables of the same name in the outer function? This is a closure issue. If a variable in the anonymous function is not shadowed by a parameter (such as fetcher in the code above), it refers to the same address as the variable of the same name in the outer scope. If it is passed via a parameter (such as u in the code above), then even though the parameter has the same name as the outer variable, the anonymous function uses the passed-in copy, not the outer variable. For loop variables in particular, we usually pass them via parameters to make a copy at the time of the call; otherwise, all goroutines started by the for loop point to the one variable that the loop keeps reassigning. (This describes Go before version 1.22; from 1.22 on, each loop iteration gets its own variable.) For closures, Go relies on escape analysis: if a variable is still referenced after the function that declared it returns, it is allocated on the heap rather than on the function stack. So a variable referenced by both the inner and the outer function is allocated on the heap.

- Since the string u is immutable, why do all goroutines still reference a constantly changing value? A string value is indeed immutable, but the variable u is reassigned to a different string on every iteration, and the goroutine shares the reference to the variable u with the outer goroutine.
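The two capture modes from the closure answer can be seen side by side in a short sketch. The function collect is a made-up name: the loop variable u is passed as an argument, so each goroutine gets its own copy, while seen, mu and wg are captured and therefore shared with the outer function.

```go
package main

import (
	"fmt"
	"sort"
	"sync"
)

// collect (hypothetical name) starts one goroutine per URL and gathers what
// each goroutine observed as "its" URL.
func collect(urls []string) []string {
	var mu sync.Mutex
	var wg sync.WaitGroup
	var seen []string // captured by the closure: shared with the goroutines

	for _, u := range urls {
		wg.Add(1)
		go func(u string) { // this u is a parameter: a copy made at call time
			defer wg.Done()
			mu.Lock()
			seen = append(seen, u)
			mu.Unlock()
		}(u)
	}
	wg.Wait()
	sort.Strings(seen) // goroutine order is not deterministic, so sort
	return seen
}

func main() {
	fmt.Println(collect([]string{"c", "a", "b"})) // prints: [a b c]
}
```

Passing u explicitly gives the same result on every Go version, which is why it remains a reasonable habit.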

Removing Locks

If you remove the lock when updating the map and run it a few times, you may find nothing abnormal, because races are actually hard to detect. Fortunately, Go provides a race detection tool to help you find potential races: go run -race crawler.go.

Note that this tool does not perform static analysis; instead, it observes and records the execution traces of each goroutine during dynamic execution and analyzes them. As a result, it can only report races on code paths that actually run.

Number of Threads

Q&A

- How many threads (goroutines) are running concurrently during the entire execution of this code?

The code does not impose any obvious limit, but it is clearly positively correlated with the number of URLs and the fetching time. In the example, the input contains only five URLs, so there is no problem. But in reality, doing this might launch millions of goroutines at the same time. Therefore, one improvement is to start a fixed number of worker goroutines in a pool; each worker asks for or is assigned the next task after finishing its current one.
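The worker pool idea can be sketched as follows. This is only an illustration with made-up names (processAll, jobs, results), and the "work" is just taking the length of a string instead of fetching a page. It assumes workers is at least 1.

```go
package main

import (
	"fmt"
	"sync"
)

// processAll (hypothetical name) runs a fixed pool of `workers` goroutines
// over the tasks, so the number of goroutines no longer grows with the
// number of URLs. Assumption: workers >= 1.
func processAll(tasks []string, workers int) int {
	jobs := make(chan string)
	results := make(chan int)

	var wg sync.WaitGroup
	for i := 0; i < workers; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for t := range jobs { // take the next task when the last is done
				results <- len(t) // stand-in for fetching the page
			}
		}()
	}

	go func() { // feed the tasks, then tell the workers there are no more
		for _, t := range tasks {
			jobs <- t
		}
		close(jobs)
	}()

	go func() { // close results once every worker has exited
		wg.Wait()
		close(results)
	}()

	total := 0
	for r := range results {
		total += r
	}
	return total
}

func main() {
	fmt.Println(processAll([]string{"a", "bb", "ccc"}, 2)) // prints: 6
}
```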

Communicating via Channels

We can implement a new version of the crawler that does not use locks + shared variables, but instead uses Go’s built-in syntax: channels for communication. The specific approach is similar to implementing a producer-consumer model, using a channel as a message queue.

- Initially, put the seed URL into the channel.

- Consumer: the master continuously takes URLs from the channel, checks whether they have been fetched, and then starts a new worker goroutine to crawl them.

- Producer: the worker goroutine crawls the given task URL and puts the parsed result URLs back into the channel.

- The master uses a variable n to track the number of outstanding tasks; increment by one when a task is issued; decrement by one when a result is retrieved from the channel and processed (i.e., scheduled to a worker); exit the program when all tasks are finished.

func worker(url string, ch chan []string, fetcher Fetcher) {
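The steps above can be sketched roughly as follows. This is a reconstruction under assumptions, not the course's exact listing: the Fetcher interface and the in-memory fakeFetcher are mine, and I let master return the number of fetched URLs purely so the result is easy to check.

```go
package main

import "fmt"

// Fetcher is an assumed interface: Fetch returns the URLs found on a page.
type Fetcher interface {
	Fetch(url string) ([]string, error)
}

// fakeFetcher is a hypothetical in-memory "web" used instead of real HTTP.
type fakeFetcher map[string][]string

func (f fakeFetcher) Fetch(url string) ([]string, error) {
	if urls, ok := f[url]; ok {
		return urls, nil
	}
	return nil, fmt.Errorf("not found: %s", url)
}

// worker is the producer: it fetches one URL and sends back what it found.
// It always sends exactly one message, even on error, so n stays accurate.
func worker(url string, ch chan []string, fetcher Fetcher) {
	urls, err := fetcher.Fetch(url)
	if err != nil {
		ch <- []string{}
		return
	}
	ch <- urls
}

// master is the consumer. Only master touches fetched, so no lock is needed.
func master(ch chan []string, fetcher Fetcher) int {
	n := 1 // the seed message is outstanding
	fetched := make(map[string]bool)
	for urls := range ch {
		for _, u := range urls {
			if !fetched[u] {
				fetched[u] = true
				n++ // one more result to wait for
				go worker(u, ch, fetcher)
			}
		}
		n-- // this message has been processed
		if n == 0 {
			break
		}
	}
	return len(fetched)
}

func ConcurrentChannel(url string, fetcher Fetcher) int {
	ch := make(chan []string) // unbuffered
	go func() {
		ch <- []string{url} // sent from a goroutine so it cannot block master
	}()
	return master(ch, fetcher)
}

func main() {
	web := fakeFetcher{"a": {"b", "c"}, "b": {"a", "c"}, "c": {"a"}}
	fmt.Println(ConcurrentChannel("a", web)) // prints: 3
}
```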

Q&A:

- The master reads from the channel and multiple workers write to the channel—won’t there be a race? Channels are thread-safe.

- Does the channel not need to be closed in the end? We track the number of all executing tasks with n. Therefore, when exiting at n == 0, there are no tasks/results left in the channel, so neither the master nor the workers hold a reference to the channel, and the garbage collector will reclaim it shortly after.

- Why does ConcurrentChannel need to use a goroutine to write a URL into the channel? The channel is unbuffered, so a send blocks until a receiver is ready. If ConcurrentChannel sent the seed URL directly, it would block forever on the write, because the master would never get to start reading.