This article is based on the OSDI 2020 “Virtual Consensus in Delos” paper presentation. It explores the control-plane storage system in distributed systems and proposes a distributed architecture based on layered abstraction. The core idea is to introduce a logical protocol layer, allowing the physical layer to be implemented and migrated on demand—somewhat analogous to how virtual memory relates to physical memory in a single-machine system.

Author: Muniao’s Notes https://www.qtmuniao.com/2021/03/14/facebook-delos/ , please indicate the source when reposting

Background

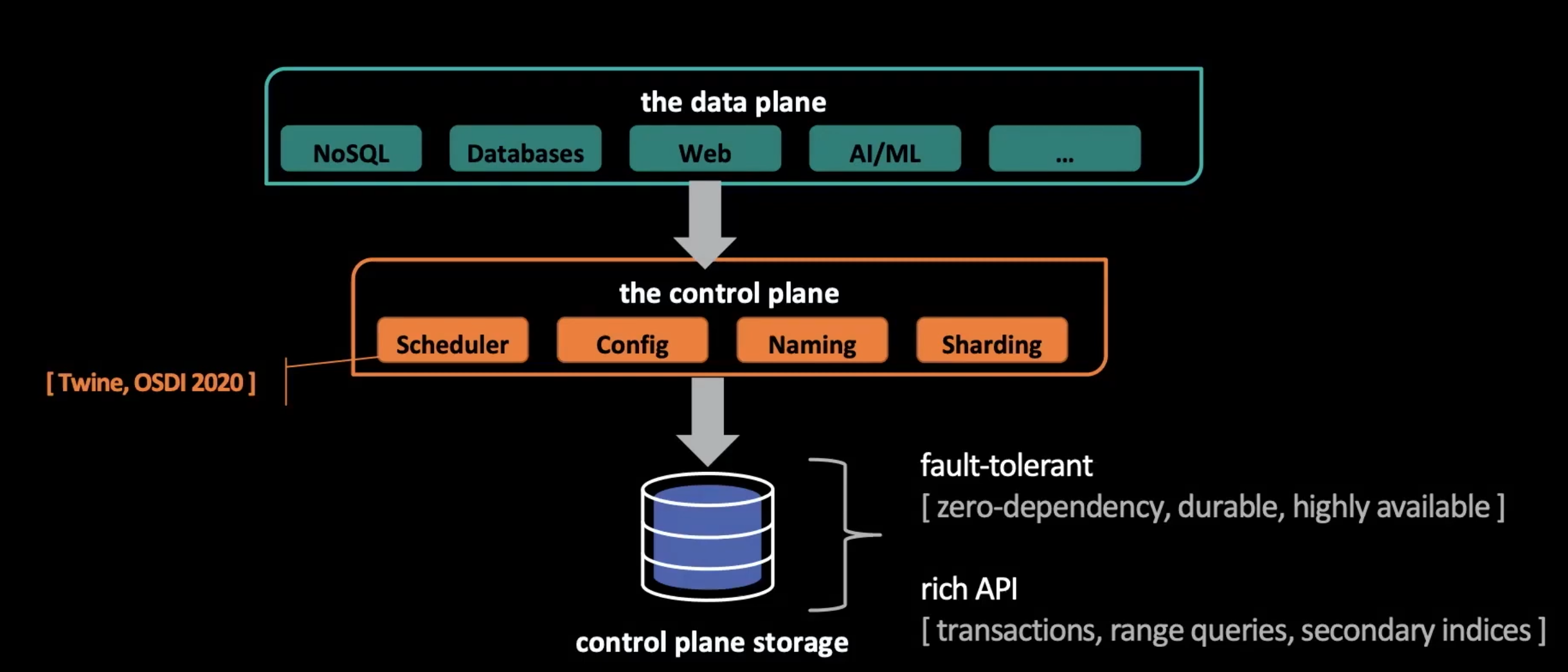

Facebook’s software stack generally consists of two layers: the upper layer is the data plane, and the lower layer is the control plane.

facebook software stack


The data plane includes a large number of services that need to store and process massive amounts of data. The control plane supports the data plane and performs control functions: scheduling, configuration, naming, sharding, and so on. The control plane is usually stateful; for example, it holds control metadata. To store this metadata, the control plane needs its own storage. The control plane has the following requirements for storage:

- Fault tolerance: zero dependencies, durable, and highly available.

- Rich API: transactions, range queries, and secondary indexes.

In 2017, Facebook used several components as the control plane’s storage, including:

- MySQL: rich API and strong expressiveness, but does not support fault tolerance.

- ZooKeeper: fault-tolerant and zero-dependency, but with a weak API.

Neither component satisfied both requirements on its own. Moreover, both are monolithic, which makes it hard to retrofit them into systems that provide fault tolerance and a rich API at once, and the team was working against very tight deadlines. The answer they ultimately delivered was Delos.

Architecture

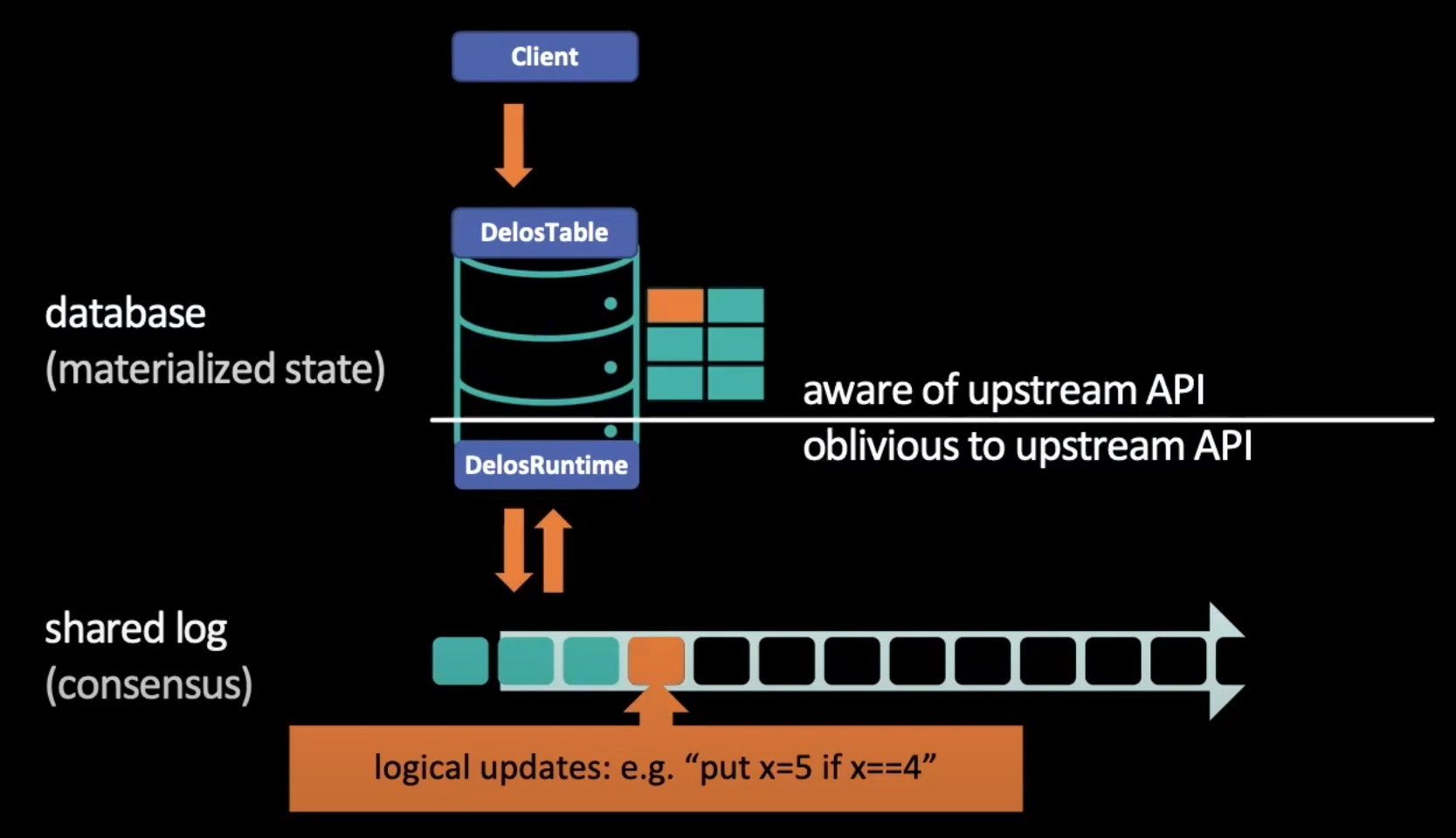

Delos is a control-plane storage system based on a shared log. Database-layer instances interact with the shared log through append and read operations, and thereby keep their externally visible state consistent. Research on shared logs over the past few decades has shown that exposing the log as an API hides most consensus-protocol details from the database layer.

design based sharedlog


Read/Write Procedure

read write procedure via shared log


The storage service can be divided into two layers: the DB API layer and the shared-log runtime layer. As shown in the figure above, taking table storage as an example, at the upper layer, DelosTable is responsible for providing the table storage API; at the lower layer, DelosRuntime is responsible for reading and writing the shared log. A typical write procedure is as follows:

- The client initiates a write request.

- The DelosTable layer forwards it to DelosRuntime.

- DelosRuntime serializes the request and appends it to the shared log.

- Each server detects this append, reads its contents, and applies it to the local state machine in the same order.
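The write procedure above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `SharedLog` is an in-memory stand-in for the real shared log, and `TableServer`, `put`, and `sync` are hypothetical names, not the DelosTable or DelosRuntime API.

```python
import json


class SharedLog:
    """In-memory stand-in for the shared log: one totally ordered list."""

    def __init__(self):
        self._entries = []

    def append(self, payload: bytes) -> int:
        self._entries.append(payload)
        return len(self._entries) - 1  # position of the new entry

    def read_from(self, start: int) -> list:
        return self._entries[start:]


class TableServer:
    """Hypothetical DB-layer replica that applies log entries to a dict."""

    def __init__(self, log: SharedLog):
        self.log = log
        self.state = {}
        self.applied = 0  # next log position to apply

    def put(self, key: str, value: str) -> None:
        # Serialize the request and append it; local state is only
        # changed by playing the log forward, never directly.
        cmd = {"op": "put", "key": key, "value": value}
        self.log.append(json.dumps(cmd).encode())
        self.sync()

    def sync(self) -> None:
        # Apply every entry not yet seen, strictly in log order.
        for payload in self.log.read_from(self.applied):
            cmd = json.loads(payload)
            if cmd["op"] == "put":
                self.state[cmd["key"]] = cmd["value"]
            self.applied += 1


log = SharedLog()
a, b = TableServer(log), TableServer(log)
a.put("shard-1", "host-a")
b.put("shard-1", "host-b")
a.sync()
b.sync()
```

Because both replicas apply the same entries in the same order, they end up with identical state regardless of which server accepted each write.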

In this architecture, there are two key design points:

- The shared-log layer provides a minimal API with linearizability guarantees.

- Based on this concise API, the upper layer can conveniently provide implementations of different storage interfaces.

Virtual Consensus

So far, the architecture design looks quite simple, but we know that complexity can only be shifted, not disappear into thin air. The most complex consensus protocol is hidden behind the shared log, so the question arises:

- How can we quickly build a shared-log component that is backed by a consensus protocol?

- As technology evolves, if we later want to use a better consensus protocol, how can we replace it?

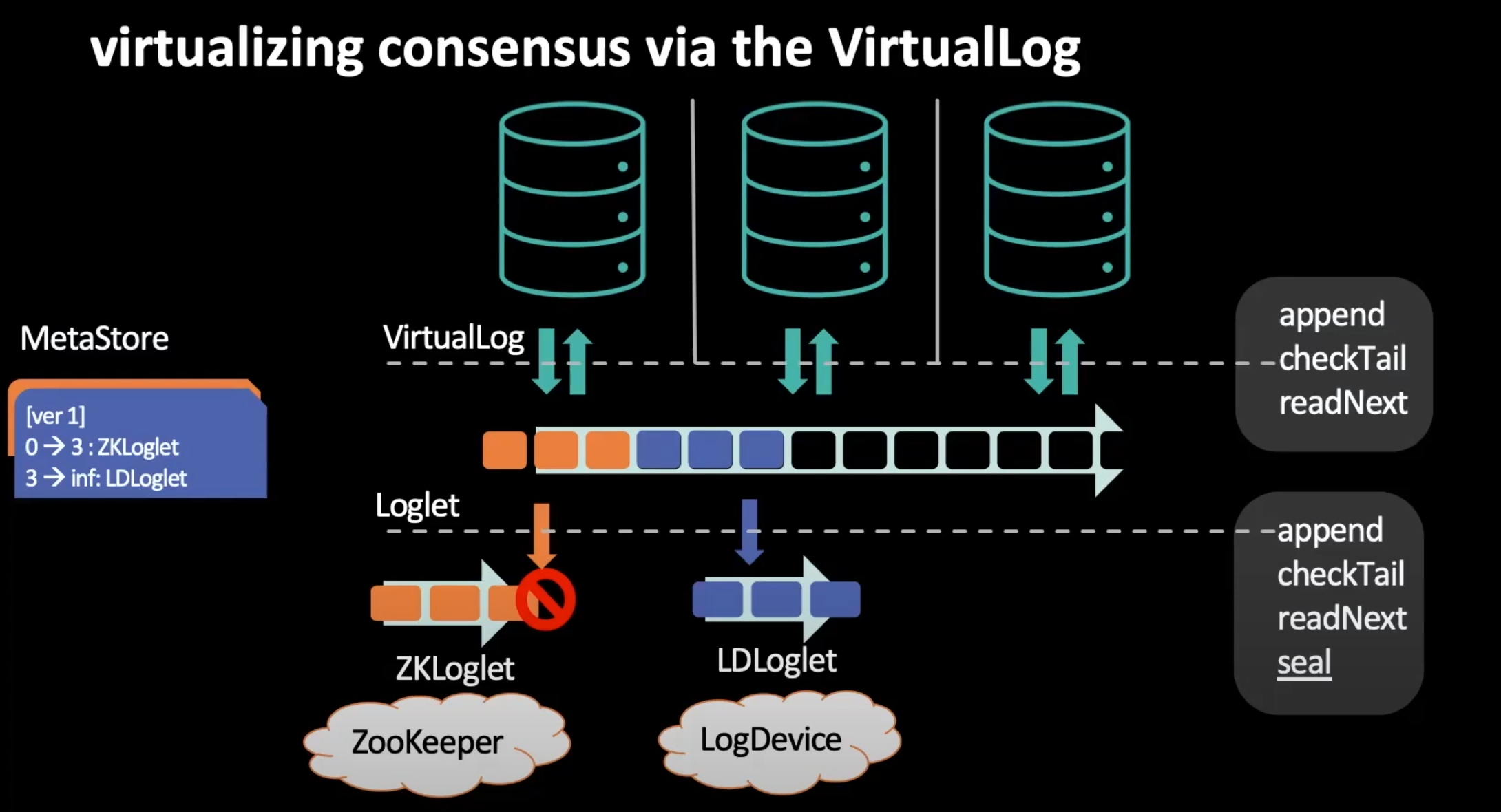

To solve these problems, Delos proposes Virtual Consensus. By abstracting a virtual consensus protocol layer, Delos’s shared-log component can quickly reuse existing implementations, such as ZooKeeper; later, to improve performance, it can also use this layer to migrate the underlying implementation without downtime.

In Delos, the carrier layer of the virtual consensus protocol is called VirtualLog. For the upper layer, the DB layer is implemented based on the VirtualLog layer; for the lower layer, VirtualLog is mapped to a set of physical shared logs called Loglets. Each Loglet provides the same API as the shared log, plus a seal command. Once sealed, a Loglet no longer accepts appends. To store the mapping from VirtualLog logical space to Loglets physical space, Delos introduces a new component: MetaStore.
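A minimal sketch of this mapping, assuming an in-memory `Loglet` and a chain kept in a plain Python list (in Delos the chain lives in MetaStore). The class and method names here, including `switch_to`, are hypothetical and chosen only for illustration.

```python
class Loglet:
    """In-memory stand-in for a physical shared log with a seal command."""

    def __init__(self, name):
        self.name = name
        self.entries = []
        self.sealed = False

    def append(self, payload):
        if self.sealed:
            raise RuntimeError(f"loglet {self.name} is sealed")
        self.entries.append(payload)
        return len(self.entries) - 1  # offset inside this loglet

    def seal(self):
        self.sealed = True


class VirtualLog:
    """Maps one virtual address space onto a chain of Loglets."""

    def __init__(self, first):
        # Each chain element is (virtual start position, loglet).
        self.chain = [(0, first)]

    def append(self, payload):
        start, active = self.chain[-1]
        return start + active.append(payload)

    def read(self, pos):
        # Use the last segment whose start is <= pos.
        for start, loglet in reversed(self.chain):
            if pos >= start:
                return loglet.entries[pos - start]
        raise IndexError(pos)

    def switch_to(self, new_loglet):
        start, active = self.chain[-1]
        active.seal()  # the old loglet accepts no further appends
        self.chain.append((start + len(active.entries), new_loglet))


vlog = VirtualLog(Loglet("zookeeper"))
vlog.append("a")
vlog.append("b")
vlog.switch_to(Loglet("logdevice"))
vlog.append("c")  # lands in the new loglet at virtual position 2
```

Sealing before extending the chain is what fixes the old Loglet's length, so the new segment's start position cannot be invalidated by a late append.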

MetaStore is a simple versioned key-value store. Each Loglet switch is stored as a new version of the mapping, so VirtualLog directs traffic to the new Loglet as soon as that version is written. The figure below shows VirtualLog putting a new version of the mapping (ver0 -> ver1) into MetaStore, which switches traffic from ZooKeeper to LogDevice without downtime.
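One way to picture a versioned store is as a single register with a conditional put. The sketch below is an assumption for illustration: the compare-on-version semantics, the `MetaStore` method names, the mapping layout, and the start position `1000` are all hypothetical, and a real MetaStore would be replicated rather than held in one process.

```python
class MetaStore:
    """Single-process stand-in for a versioned register."""

    def __init__(self, initial):
        self._version = 0
        self._value = initial

    def get(self):
        return self._version, self._value

    def put(self, expected_version, new_value):
        # Only the writer that saw the current version may install
        # the next one; a concurrent reconfiguration loses the race.
        if expected_version != self._version:
            return False
        self._version += 1
        self._value = new_value
        return True


store = MetaStore([{"start": 0, "loglet": "zookeeper"}])

ver, chain = store.get()  # ver0
new_chain = chain + [{"start": 1000, "loglet": "logdevice"}]
installed = store.put(ver, new_chain)  # ver0 -> ver1
stale = store.put(ver, chain)  # a second writer still holding ver0
```

Here `installed` is `True` and `stale` is `False`: once ver1 exists, a writer that still believes ver0 is current cannot overwrite the mapping.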

virtualizing consensus via the VirtualLog


Custom Loglet

After meeting basic requirements, to further improve performance, Delos wanted to customize its own Loglet with the following characteristics:

- Simple

- Fast

- Fault tolerant

NativeLoglet

Achieving any two of these is relatively easy; achieving all three is somewhat difficult. Delos uses a divide-and-conquer strategy, decomposing it into two components:

- MetaStore: responsible for fault tolerance.

- Loglet: focused on performance.

At this point, all sources of consistency are moved onto MetaStore. MetaStore has relatively simple functionality: it only needs to save the space mapping and provide a fault-tolerant reconfiguration primitive (i.e., operating on the mapping, such as Loglet switching), and reconfiguration is a low-frequency operation. Therefore, the MetaStore implementation does not need to focus on performance optimization; it only needs to be implemented according to Lamport’s original Paxos, which ensures the simplicity of the MetaStore implementation.

At the same time, the Loglet’s responsibilities are weakened: it no longer needs to provide full fault tolerance, only a highly available seal command. Thus, when a Loglet becomes unavailable, VirtualLog only needs to seal it and then switch traffic to another Loglet.

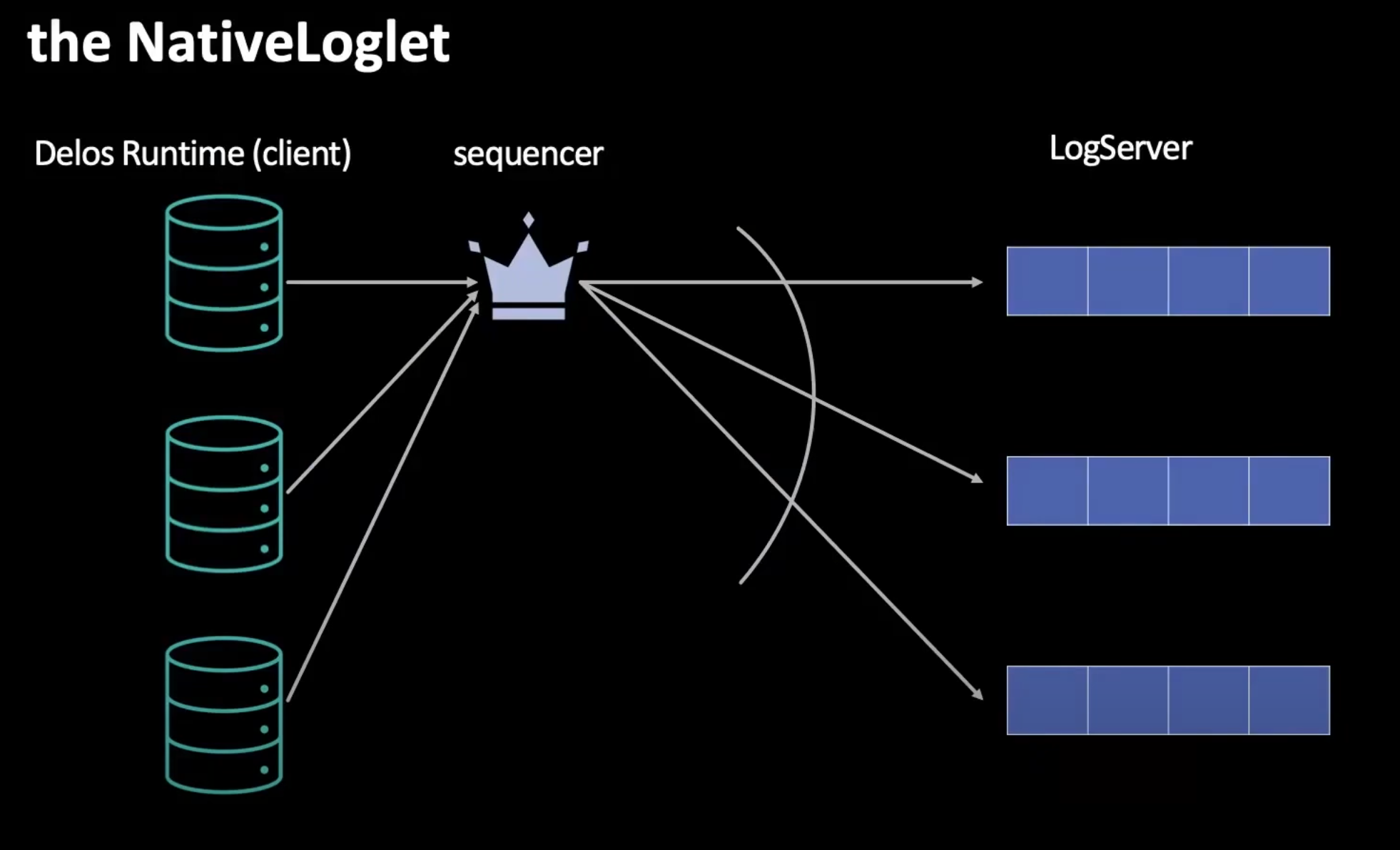

Based on this, Delos implemented a new Loglet instance — NativeLoglet.

the NativeLoglet


Intuitively, NativeLoglet is somewhat like a weakened version of LogDevice. Its interaction procedure is as follows:

- During normal operation, a certain LogServer in the cluster acts as the Sequencer.

- All Append requests issued by DelosRuntime must be sequenced by the Sequencer and then appended to each LogServer.

- When the LogServer hosting the Sequencer crashes, DelosRuntime directly sends CheckTail requests to all LogServers to determine the tail via a quorum protocol.

- Any DelosRuntime can initiate a seal request to seal the NativeLoglet.
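The append and CheckTail steps can be illustrated with a deliberately simplified quorum sketch. It assumes majority quorums and gap-free local logs, and it omits sealing and repair, so it is not the NativeLoglet protocol itself; `LogServer`, `sequencer_append`, and `check_tail` are hypothetical names.

```python
class LogServer:
    """In-memory stand-in for one LogServer."""

    def __init__(self):
        self.entries = []
        self.up = True

    def store(self, pos, payload):
        if not self.up or pos != len(self.entries):
            return False  # unreachable, or the write would leave a gap
        self.entries.append(payload)
        return True

    def local_tail(self):
        return len(self.entries) if self.up else None


def sequencer_append(servers, next_pos, payload):
    """Write one entry at next_pos; it counts as committed once a
    majority of servers has stored it."""
    acks = sum(1 for s in servers if s.store(next_pos, payload))
    return acks > len(servers) // 2


def check_tail(servers):
    """Quorum read of the tail, for when the sequencer is unreachable."""
    tails = [t for t in (s.local_tail() for s in servers) if t is not None]
    if len(tails) <= len(servers) // 2:
        raise RuntimeError("no quorum of LogServers reachable")
    # Any committed entry is on a majority, and two majorities always
    # overlap, so the largest tail in a quorum covers every committed entry.
    return max(tails)


servers = [LogServer() for _ in range(3)]
sequencer_append(servers, 0, "a")
sequencer_append(servers, 1, "b")
servers[0].up = False  # the server hosting the sequencer crashes
tail = check_tail(servers)
```

The value returned by `max` may also include entries that reached only a minority of servers; a full protocol has to repair or discard those, which this sketch leaves out.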

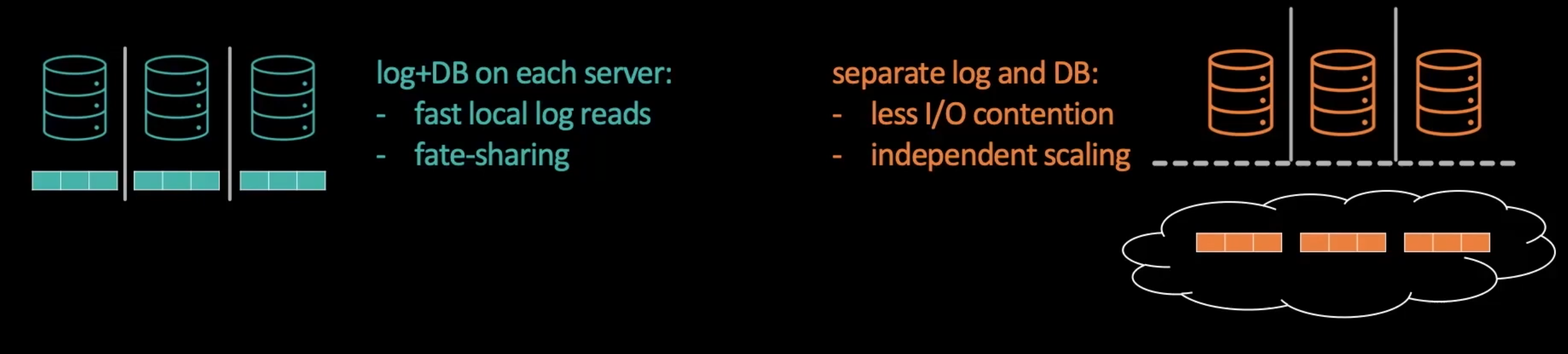

Note that in NativeLoglet, all LogServers can be co-located with DelosRuntimes (called Converged mode) or deployed separately (called Disaggregated mode). The former can achieve better local read performance and bind the lifecycle of database instances and log instances together. The latter separates the database layer from the log layer, avoiding resource contention between different layers and allowing each to scale independently on demand.

converged vs disaggregated


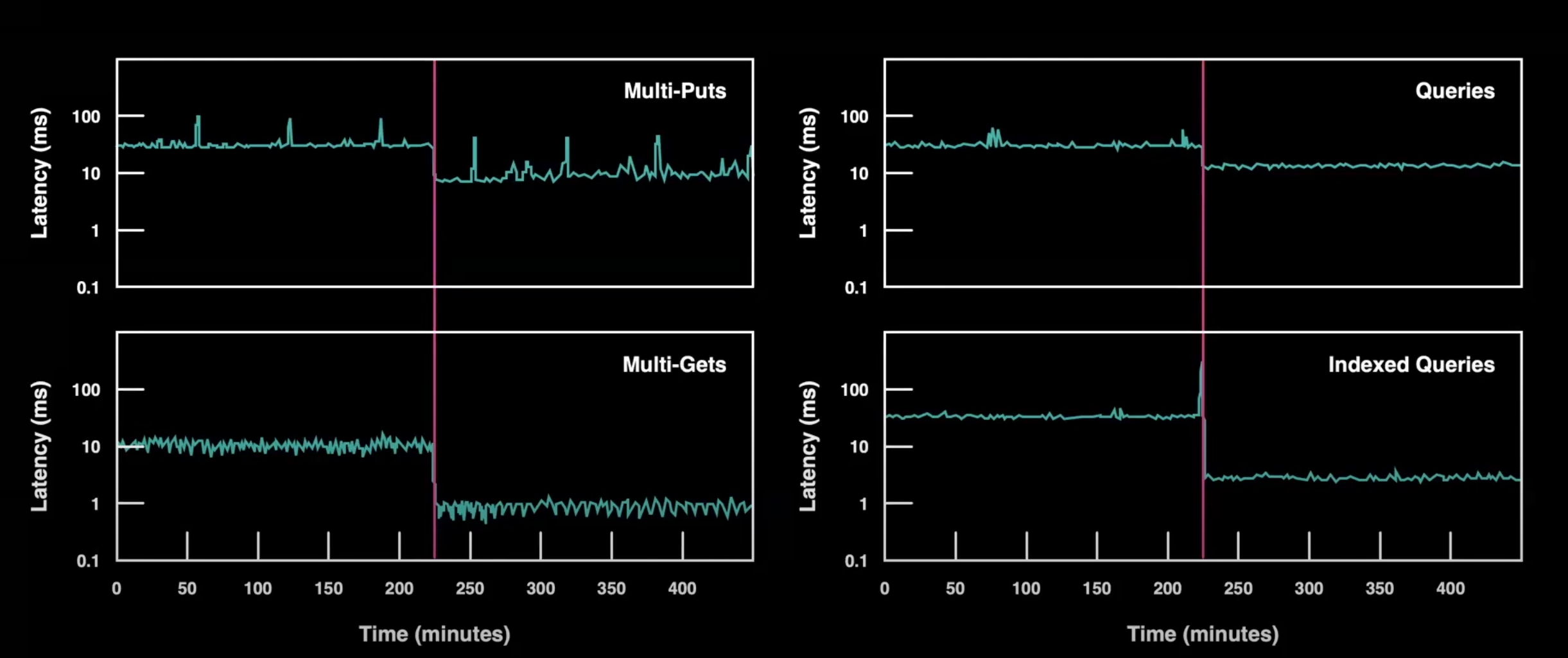

The figure below compares performance before and after switching to NativeLoglet:

NativeLoglet compare


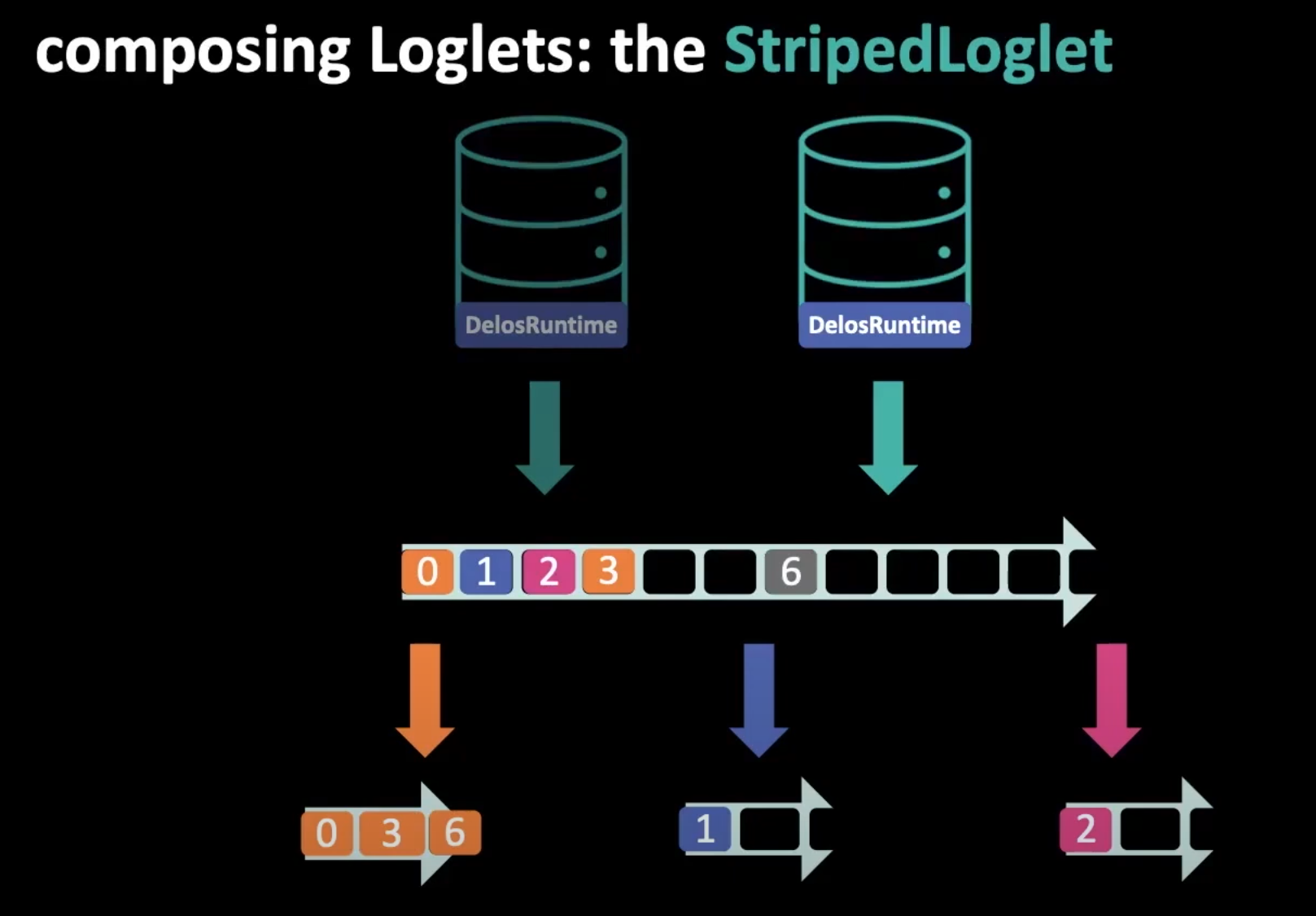

StripedLoglet

To further improve performance, under the VirtualLog abstraction, Delos leveraged striping to create another implementation called StripedLoglet. This implementation combines multiple Loglet instances at the bottom layer; when an Append request arrives, it round-robins the request to each underlying Loglet system, thereby greatly improving performance.
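Round-robin striping amounts to a small piece of address arithmetic. The sketch below assumes a single appender and uses plain lists as the underlying Loglets; the `StripedLoglet` class shown here and its `locate` method are hypothetical and only illustrate the position mapping.

```python
class StripedLoglet:
    """Spreads consecutive positions across several underlying logs."""

    def __init__(self, stripes):
        self.stripes = [[] for _ in range(stripes)]
        self.next_pos = 0

    def locate(self, pos):
        # Position p lives in stripe p mod n, at offset p div n.
        n = len(self.stripes)
        return pos % n, pos // n

    def append(self, payload):
        pos = self.next_pos
        stripe, _offset = self.locate(pos)
        self.stripes[stripe].append(payload)
        self.next_pos += 1
        return pos

    def read(self, pos):
        stripe, offset = self.locate(pos)
        return self.stripes[stripe][offset]


striped = StripedLoglet(stripes=2)
for item in ["e0", "e1", "e2", "e3", "e4"]:
    striped.append(item)
```

With two stripes, even positions land in the first underlying log and odd positions in the second, so each underlying log handles roughly half of the append load.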

In addition, StripedLoglet allows multiple DelosRuntimes to perform parallel Appends using different strategies, and allows temporary holes to exist. Later, a mechanism similar to a sliding window is used for piggybacked ACKs, further improving performance.

The multiple underlying Loglet systems can share one cluster or be dispersed across multiple clusters as needed.

striped loglet


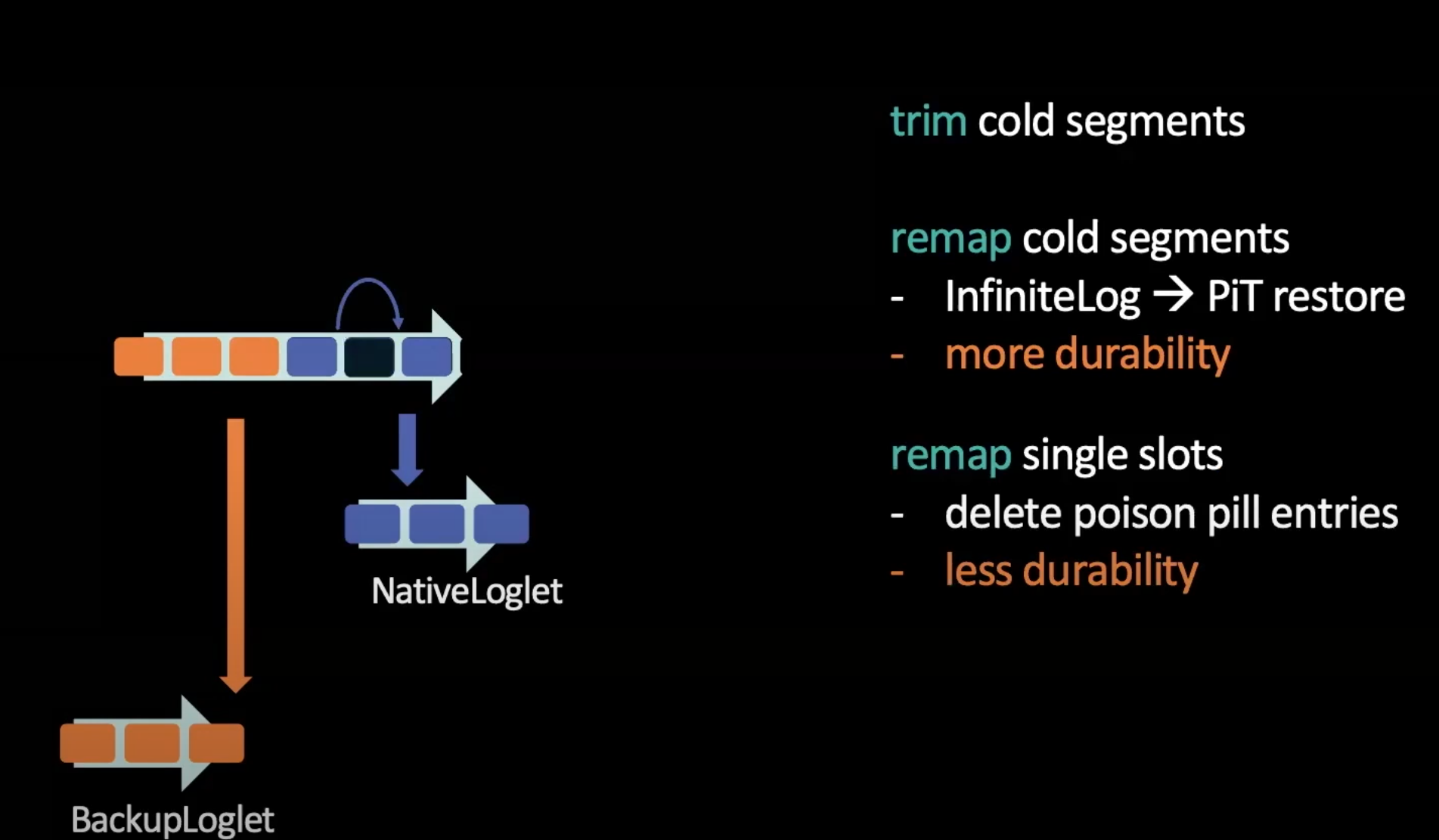

The Last Thing: VirtualLog Trimming

Another detail worth mentioning is the Trim operation provided by VirtualLog. Thanks to the virtualization abstraction, Delos can remove old log entries simply by deleting their mappings. A better approach is to move old entries to a BackupLoglet on a cold cluster and update the mapping accordingly; this presents an infinite log to the outside while allowing fine-grained storage control over log segments by age.
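Both options reduce to edits on the mapping. A minimal sketch, assuming the mapping is a list of `(start, end, loglet_name)` segments with an exclusive `end` and `None` for the still-open segment; the function names, segment layout, and Loglet names are hypothetical, and the actual copying of data to the cold cluster is omitted.

```python
def trim(chain, trim_pos):
    """Drop every segment that lies entirely below trim_pos."""
    return [(s, e, name) for s, e, name in chain
            if e is None or e > trim_pos]


def archive(chain, cutoff, backup="backup-loglet"):
    """Point every segment that ends at or before cutoff to a backup
    loglet instead of deleting it."""
    return [(s, e, backup if e is not None and e <= cutoff else name)
            for s, e, name in chain]


chain = [
    (0, 100, "native-1"),
    (100, 250, "native-2"),
    (250, None, "native-3"),  # active segment, still growing
]

trimmed = trim(chain, 100)
archived = archive(chain, 100)
```

After `trim`, positions below 100 are no longer addressable; after `archive`, they remain readable but are served from the backup Loglet.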

On the other hand, by modifying the mapping in MetaStore, Delos allows modifying individual log records and deleting certain problematic logs to avoid system hangs or repeated crash-restart cycles. This is something previous consensus protocols could not do.

trimming the VirtualLog


Conclusion

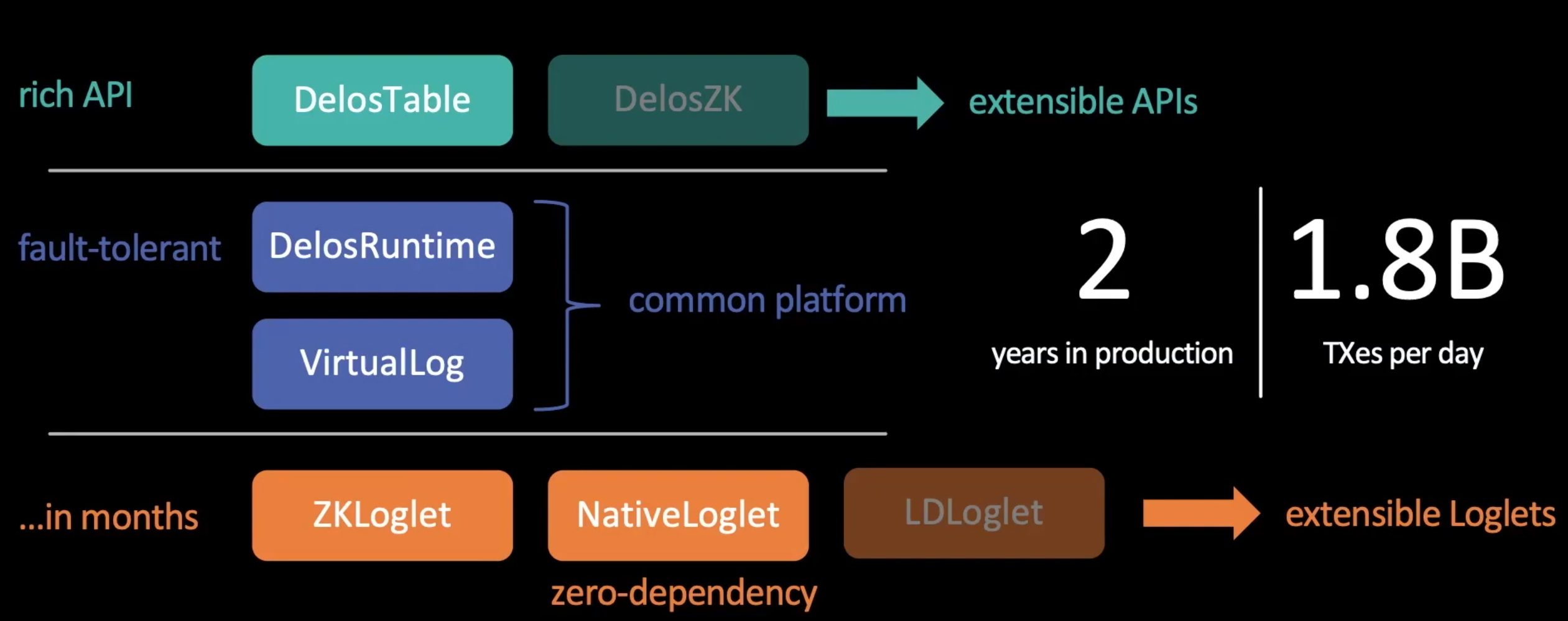

Delos sits at the bottom of Facebook’s system (used for control-plane storage). It adopts a layered design that enables:

- At the beginning of the project, existing systems can be reused in certain layers for rapid rollout and deployment.

- After going live, higher-performance components and newer consensus protocols can be swapped in without downtime.

summary


Virtual consensus for distributed systems is somewhat like virtual memory for single-machine systems: through layered decoupling, designers have more room to maneuver when building systems. Whether this idea will live up to its name remains to be seen in time and practice.

References

- OSDI 20 paper presentation video: https://www.youtube.com/watch?v=wd-GC_XhA2g

- Facebook Engineering article: https://engineering.fb.com/2019/06/06/data-center-engineering/delos/

- Paper “Virtual Consensus in Delos”: https://research.fb.com/publications/virtual-consensus-in-delos/