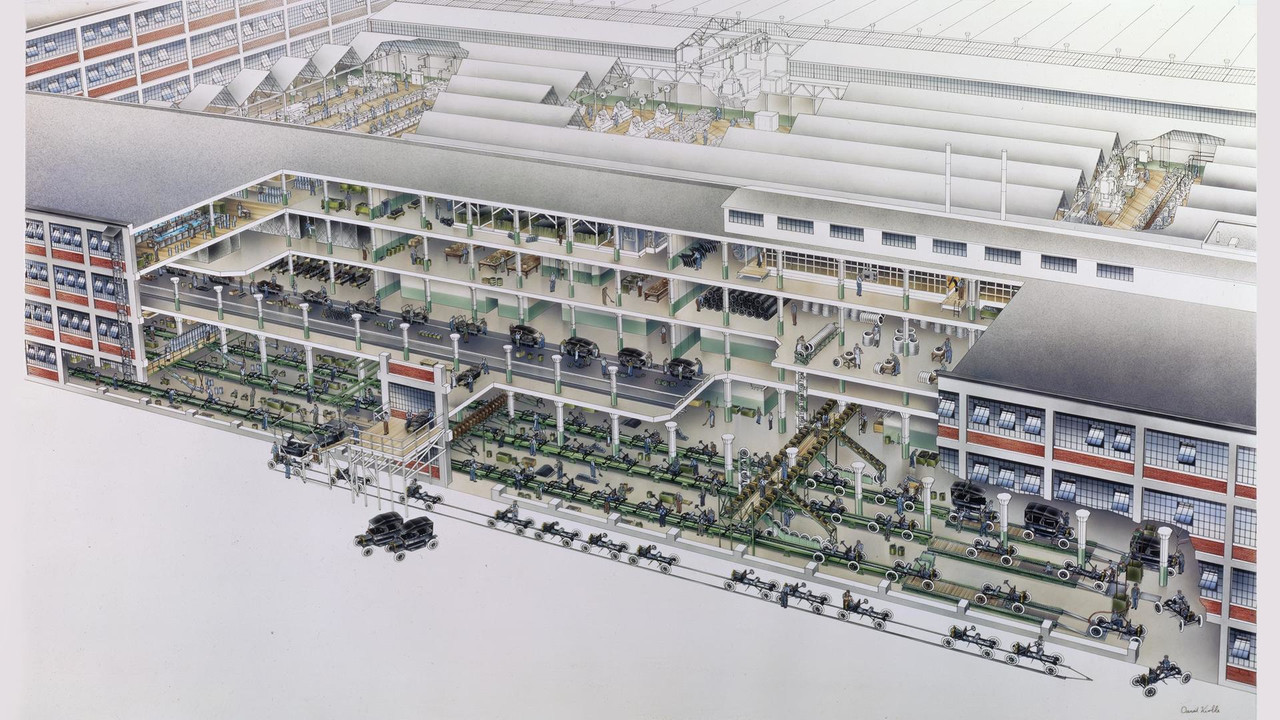

The originator of the “industrial assembly line,” the motor assembly of the Ford Model T, broke the assembly process into 29 steps, reducing assembly time from an average of twenty minutes to five minutes — a fourfold efficiency gain. The image below is sourced from.

This assembly-line philosophy is ubiquitous in data processing. Its core concepts are:

- Standardized data collections: Corresponding to the object to be assembled, this is a consistent abstraction for the inputs and outputs of every stage in data processing. Consistency means that the output of any processing stage can serve as the input to any other processing stage.

- Composable data transformations: Corresponding to a single assembly step, this defines an atomic operation that transforms data. By combining various atomic operations, one can achieve powerful expressiveness.

Thus, the essence of data processing is: for different requirements, read and standardize the data collection, then apply different combinations of transformations.